publications

Reverse-chronological. See Google Scholar for the most up-to-date list.

2026

- preprint

MLPs are Efficient Distilled Generative RecommendersZitian Guo, Yupeng Hou, Clark Mingxuan Ju, Neil Shah, and Julian McAuleyarXiv preprint, 2026

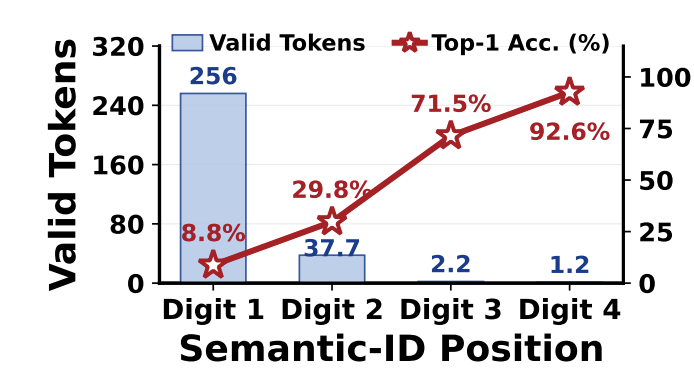

MLPs are Efficient Distilled Generative RecommendersZitian Guo, Yupeng Hou, Clark Mingxuan Ju, Neil Shah, and Julian McAuleyarXiv preprint, 2026Generative recommendation models employing Semantic IDs (SIDs) exhibit strong potential, yet their practical deployment is bottlenecked by the high inference latency of beam-expanded autoregressive decoding. In this work, we identify that standard attention-heavy Transformer decoders represent a structural overkill for this task: the hierarchical nature of SIDs makes prediction difficulty drops sharply after the first token, rendering repeated attention computations highly redundant. Driven by this insight, we propose SID-MLP, a lightweight MLP-centric distillation framework that fundamentally simplifies the decoding paradigm for GR. Instead of executing complex, step-by-step attention mechanisms, our approach captures the global user context in a single operation, decoupled from sequential token prediction. We then distill the heavy autoregressive teacher into position-specific MLP heads, eliminating the dense attention overhead while preserving prefix and context dependencies. Extensive experiments demonstrate that SID-MLP matches the accuracy of teacher models while accelerating inference by 8.74x. Crucially, this distillation strategy can serve as a plug-and-play accelerator for different backbones and tokenizer settings. Furthermore, we introduce SID-MLP++, extending our distillation framework to replace the Transformer encoder, unlocking further latency reductions. Ultimately, our work reveals that decoder-side MLPs distillation is an effective acceleration path for structured SID recommendation, while full encoder replacement offers an additional speed–accuracy trade-off.

- preprint

CoSearch: Joint Training of Reasoning and Document Ranking via Reinforcement Learning for Agentic SearchHansi Zeng, Liam Collins, Bhuvesh Kumar, Neil Shah, and Hamed ZamaniarXiv preprint, 2026

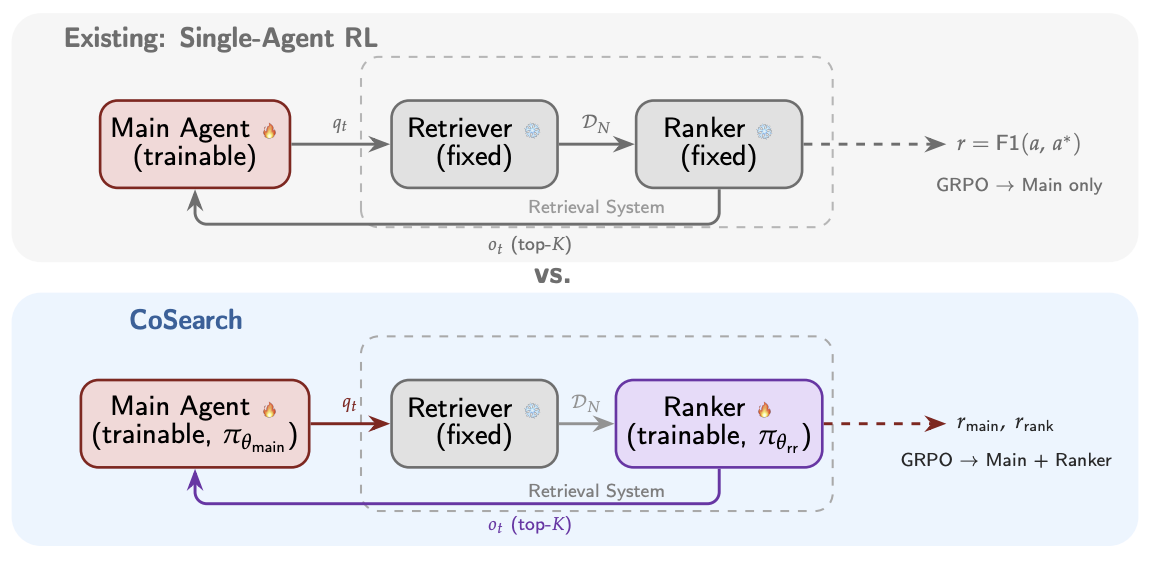

CoSearch: Joint Training of Reasoning and Document Ranking via Reinforcement Learning for Agentic SearchHansi Zeng, Liam Collins, Bhuvesh Kumar, Neil Shah, and Hamed ZamaniarXiv preprint, 2026Agentic search – the task of training agents that iteratively reason, issue queries, and synthesize retrieved information to answer complex questions – has achieved remarkable progress through reinforcement learning (RL). However, existing approaches such as Search-R1, treat the retrieval system as a fixed tool, optimizing only the reasoning agent while the retrieval component remains unchanged. A preliminary experiment reveals that the gap between an oracle and a fixed retrieval system reaches up to +26.8% relative F1 improvement across seven QA benchmarks, suggesting that the retrieval system is a key bottleneck in scaling agentic search performance. Motivated by this finding, we propose CoSearch, a framework that jointly trains a multi-step reasoning agent and a generative document ranking model via Group Relative Policy Optimization (GRPO). To enable effective GRPO training for the ranker – whose inputs vary across reasoning trajectories – we introduce a semantic grouping strategy that clusters sub-queries by token-level similarity, forming valid optimization groups without additional rollouts. We further design a composite reward combining ranking quality signals with trajectory-level outcome feedback, providing the ranker with both immediate and long-term learning signals. Experiments on seven single-hop and multi-hop QA benchmarks demonstrate consistent improvements over strong baselines, with ablation studies validating each design choice. Our results show that joint training of the reasoning agent and retrieval system is both feasible and strongly performant, pointing to a key ingredient for future search agents.

- ACL

Hierarchical Token Prepending: Enhancing Information Flow in Decoder-based LLM EmbeddingsXueying Ding, Xingyue Huang, Clark Ju, Liam Collins, Yozen Liu, Leman Akoglu, Neil Shah, and Tong ZhaoIn Annual Meeting of the Association for Computational Linguistics, 2026

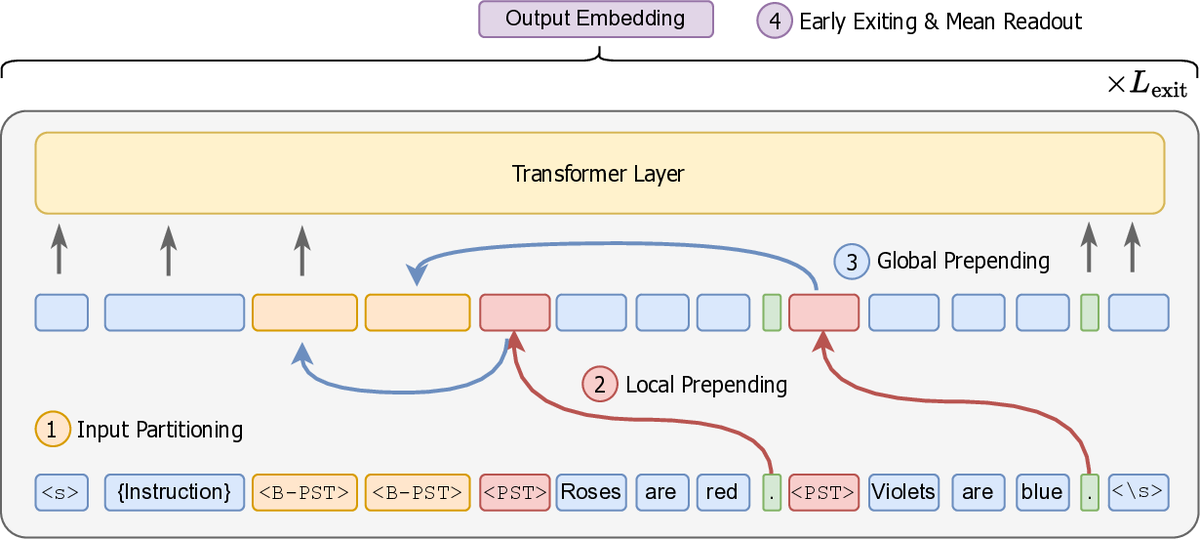

Hierarchical Token Prepending: Enhancing Information Flow in Decoder-based LLM EmbeddingsXueying Ding, Xingyue Huang, Clark Ju, Liam Collins, Yozen Liu, Leman Akoglu, Neil Shah, and Tong ZhaoIn Annual Meeting of the Association for Computational Linguistics, 2026Large language models produce powerful text embeddings, but their causal attention mechanism restricts the flow of information from later to earlier tokens, degrading representation quality. While recent methods attempt to solve this by prepending a single summary token, they over-compress information, hence harming performance on long documents. We propose Hierarchical Token Prepending (HTP), a method that resolves two critical bottlenecks. To mitigate attention-level compression, HTP partitions the input into blocks and prepends block-level summary tokens to subsequent blocks, creating multiple pathways for backward information flow. To address readout-level over-squashing, we replace last-token pooling with mean-pooling, a choice supported by theoretical analysis. HTP achieves consistent performance gains across 11 retrieval datasets and 30 general embedding benchmarks, especially in long-context settings. As a simple, architecture-agnostic method, HTP enhances both zero-shot and finetuned models, offering a scalable route to superior long-document embeddings.

- ACL

Threshold Differential Attention for Sink-Free, Ultra-Sparse, and Non-Dispersive Language ModelingXingyue Huang, Xueying Ding, Mingxuan Ju, Yozen Liu, Neil Shah, and Tong ZhaoIn Annual Meeting of the Association for Computational Linguistics, 2026

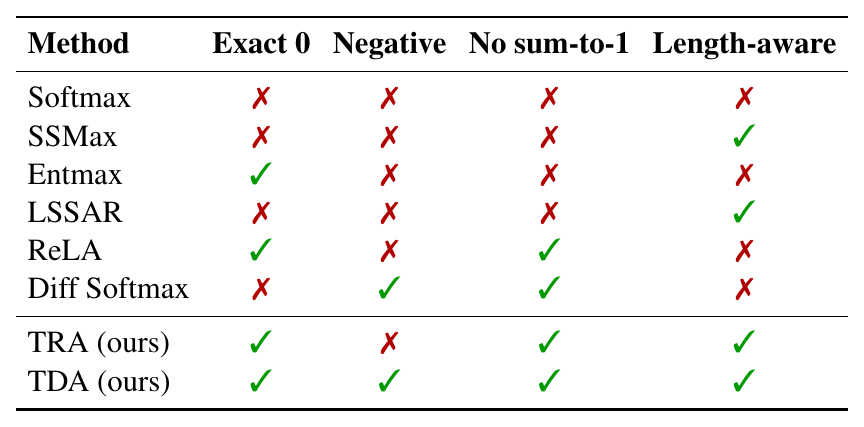

Threshold Differential Attention for Sink-Free, Ultra-Sparse, and Non-Dispersive Language ModelingXingyue Huang, Xueying Ding, Mingxuan Ju, Yozen Liu, Neil Shah, and Tong ZhaoIn Annual Meeting of the Association for Computational Linguistics, 2026Softmax attention struggles with long contexts due to structural limitations: the strict sum-to-one constraint forces attention sinks on irrelevant tokens, and probability mass disperses as sequence lengths increase. We tackle these problems with Threshold Differential Attention (TDA), a sink-free attention mechanism that achieves ultra-sparsity and improved robustness at longer sequence lengths without the computational overhead of projection methods or the performance degradation caused by noise accumulation of standard rectified attention. TDA applies row-wise extreme-value thresholding with a length-dependent gate, retaining only exceedances. Inspired by the differential transformer, TDA also subtracts an inhibitory view to enhance expressivity. Theoretically, we prove that TDA controls the expected number of spurious survivors per row to O(1) and that consensus spurious matches across independent views vanish as context grows. Empirically, TDA produces >99% exact zeros and eliminates attention sinks while maintaining competitive performance on standard and long-context benchmarks.

- SIGIR

Beyond Unimodal Perspectives: Generative Retrieval with Multimodal SemanticsJing Zhu, Mingxuan Ju, Yozen Liu, Shubham Vij, Danai Koutra, Neil Shah, and Tong ZhaoIn ACM SIGIR Conference on Research and Development in Information Retrieval, 2026

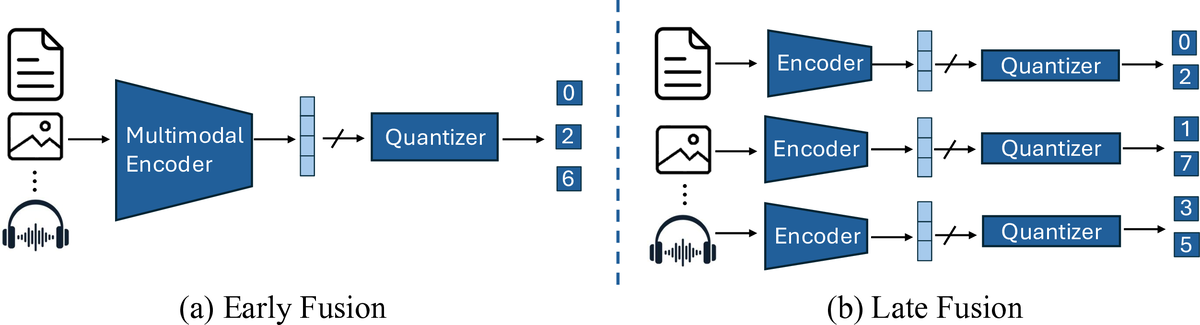

Beyond Unimodal Perspectives: Generative Retrieval with Multimodal SemanticsJing Zhu, Mingxuan Ju, Yozen Liu, Shubham Vij, Danai Koutra, Neil Shah, and Tong ZhaoIn ACM SIGIR Conference on Research and Development in Information Retrieval, 2026Generative recommendation (GR) has become a powerful paradigm in recommendation systems that implicitly links modality and semantics to item representation, in contrast to previous methods that relied on non-semantic item identifiers in autoregressive models. However, previous research has predominantly treated modalities in isolation, typically assuming item content is unimodal (usually text). We argue that this is a significant limitation given the rich, multimodal nature of real-world data and the potential sensitivity of GR models to modality choices and usage. Our work aims to explore the critical problem of Multimodal Generative Recommendation (MGR), highlighting the importance of modality choices in GR nframeworks. We reveal that GR models are particularly sensitive to different modalities and examine the challenges in achieving effective GR when multiple modalities are available. By evaluating design strategies for effectively leveraging multiple modalities, we identify key challenges and introduce MGR-LF++, an enhanced late fusion framework that employs contrastive modality alignment and special tokens to denote different modalities, achieving a performance improvement of over 20% compared to single-modality alternatives.

- SIGIR

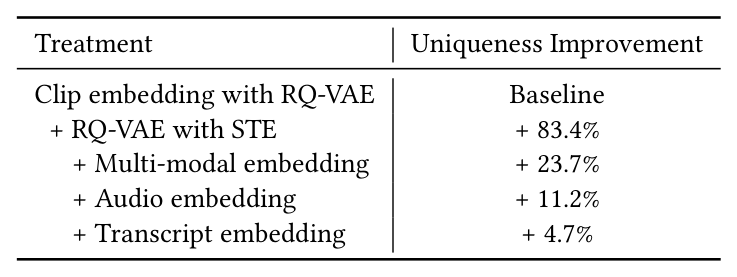

Semantic IDs for Recommender Systems at Snapchat: Use Cases, Technical Challenges, and Design ChoicesClark Mingxuan Ju, Tong Zhao, Leonardo Neves, Liam Collins, Bhuvesh Kumar, Jiwen Ren, Lili Zhang, Wenfeng Zhuo, and 10 more authorsIn ACM SIGIR Conference on Research and Development in Information Retrieval, 2026

Semantic IDs for Recommender Systems at Snapchat: Use Cases, Technical Challenges, and Design ChoicesClark Mingxuan Ju, Tong Zhao, Leonardo Neves, Liam Collins, Bhuvesh Kumar, Jiwen Ren, Lili Zhang, Wenfeng Zhuo, and 10 more authorsIn ACM SIGIR Conference on Research and Development in Information Retrieval, 2026Effective item identifiers (IDs) are an important component for recommender systems (RecSys) in practice, and are commonly adopted in many use cases such as retrieval and ranking. IDs can encode collaborative filtering signals within training data, such that RecSys models can extrapolate during the inference and personalize the prediction based on users’ behavioral histories. Recently, Semantic IDs (SIDs) have become a trending paradigm for RecSys. In comparison to the conventional atomic ID, an SID is an ordered list of codes, derived from tokenizers such as residual quantization, applied to semantic representations commonly extracted from foundation models or collaborative signals. SIDs have drastically smaller cardinality than the atomic counterpart, and induce semantic clustering in the ID space. At Snapchat, we apply SIDs as auxiliary features for ranking models, and also explore SIDs as additional retrieval sources in different ML applications. In this paper, we discuss practical technical challenges we encountered while applying SIDs, experiments we have conducted, and design choices we have iterated to mitigate these challenges. Backed by promising offline results on both internal data and academic benchmarks as well as online A/B studies, SID variants have been launched in multiple production models with positive metrics impact.

- ICML

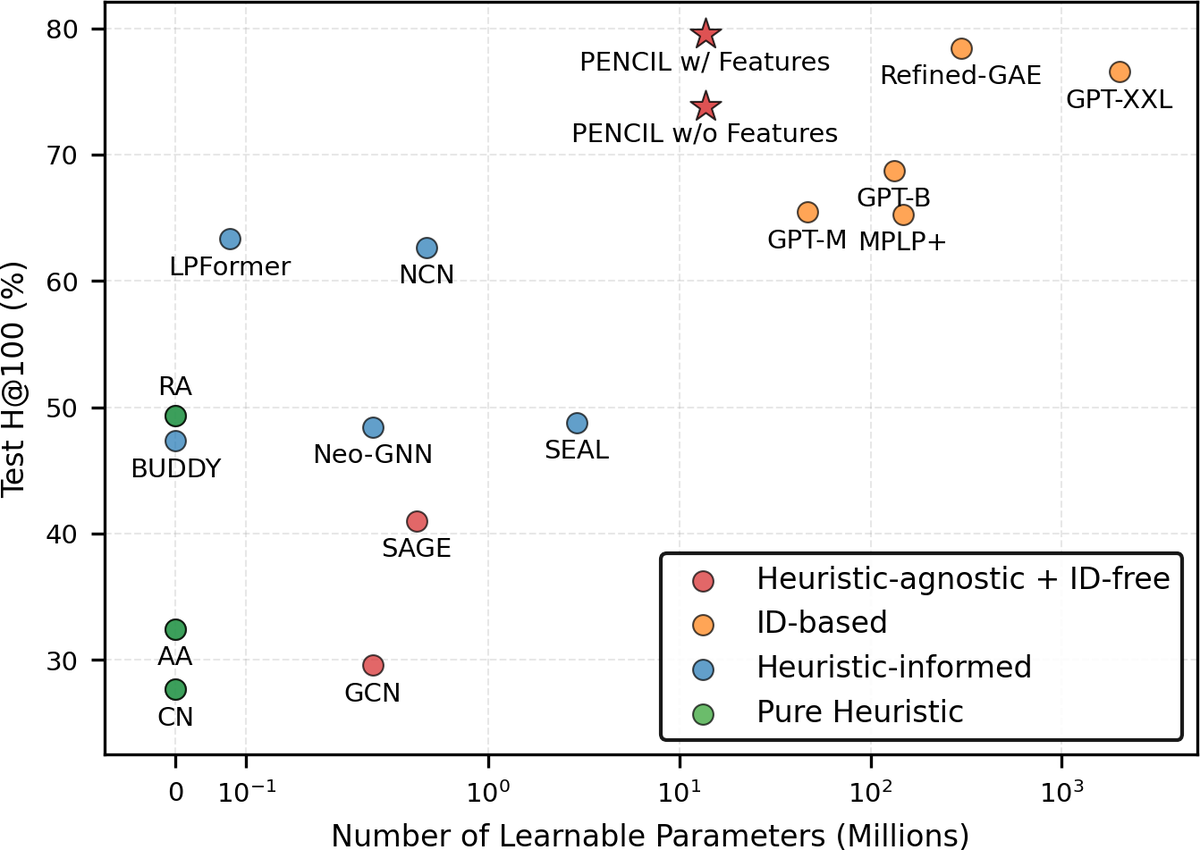

Plain Transformers are Surprisingly Powerful Link PredictorsQuang Truong, Yu Song, Donald Loveland, Clark Ju, Tong Zhao, Neil Shah, and Jiliang TangIn International Conference on Machine Learning, 2026

Plain Transformers are Surprisingly Powerful Link PredictorsQuang Truong, Yu Song, Donald Loveland, Clark Ju, Tong Zhao, Neil Shah, and Jiliang TangIn International Conference on Machine Learning, 2026Link prediction is a core challenge in graph machine learning, demanding models that capture rich and complex topological dependencies. While Graph Neural Networks (GNNs) are the standard solution, state-of-the-art pipelines often rely on explicit structural heuristics or memory-intensive node embeddings – approaches that struggle to generalize or scale to massive graphs. Emerging Graph Transformers (GTs) offer a potential alternative but often incur significant overhead due to complex structural encodings, hindering their applications to large-scale link prediction. We challenge these sophisticated paradigms with PENCIL, an encoder-only plain Transformer that replaces hand-crafted priors with attention over sampled local subgraphs, retaining the scalability and hardware efficiency of standard Transformers. Through experimental and theoretical analysis, we show that PENCIL extracts richer structural signals than GNNs, implicitly generalizing a broad class of heuristics and subgraph-based expressivity. Empirically, PENCIL outperforms heuristic-informed GNNs and is far more parameter-efficient than ID-embedding–based alternatives, while remaining competitive across diverse benchmarks – even without node features. Our results challenge the prevailing reliance on complex engineering techniques, demonstrating that simple design choices are potentially sufficient to achieve the same capabilities.

- preprint

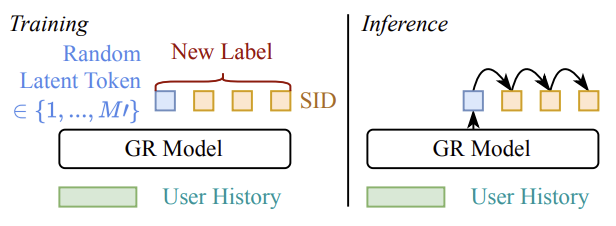

Expressiveness Limits of Autoregressive Semantic ID Generation in Generative RecommendationYupeng Hou, Haven Kim, Clark Ju, Eduardo Escoto, Neil Shah, and Julian McAuleyarXiv preprint, 2026

Expressiveness Limits of Autoregressive Semantic ID Generation in Generative RecommendationYupeng Hou, Haven Kim, Clark Ju, Eduardo Escoto, Neil Shah, and Julian McAuleyarXiv preprint, 2026Generative recommendation (GR) models generate items by autoregressively producing a sequence of discrete tokens that jointly index the target item. However, this autoregressive generation process also induces a structured decoding space whose impact on model expressiveness remains underexplored. Specifically, token-by-token generation can be viewed as traversing a decoding tree induced by semantic ID tokens, where leaf nodes correspond to candidate items. We observe that the item probabilities produced by GR models are strongly correlated with this tree structure: items that are close in the tree tend to receive similar probabilities for any given user, making it difficult to distinguish among them based on user-specific preferences. We further show theoretically that such structural correlations prevent GR models from representing even simple patterns that can be well captured by conventional collaborative filtering models. To mitigate this issue, we propose Latte, a simple modification that injects a latent token before each semantic ID, reshaping the decoding space from a single tree into multiple latent-token-conditioned trees. This design creates multiple paths with varying tree distances between items, relaxing tree-induced probability coupling and yielding an average of 3.45% relative improvement on NDCG@10. Our code is available at https://github.com/hyp1231/Latte.

- preprint

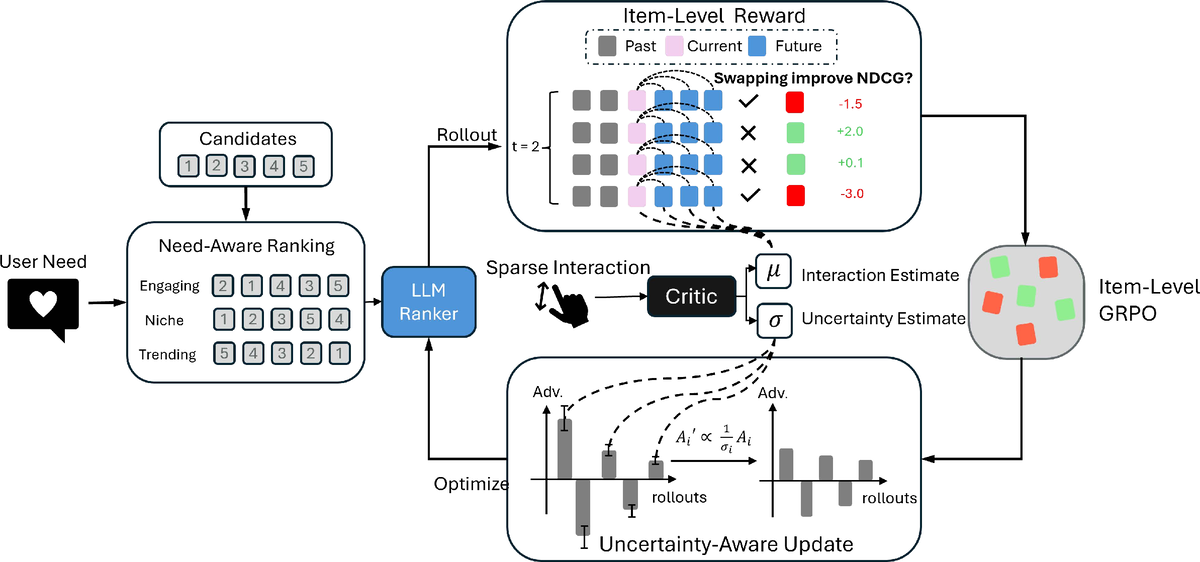

FlexRec: Adapting LLM-based Recommenders for Flexible Needs via Reinforcement LearningYijun Pan, Weikang Qiu, Qiyao Ma, Mingxuan Ju, Tong Zhao, Neil Shah, and Rex YingPreprint, 2026

FlexRec: Adapting LLM-based Recommenders for Flexible Needs via Reinforcement LearningYijun Pan, Weikang Qiu, Qiyao Ma, Mingxuan Ju, Tong Zhao, Neil Shah, and Rex YingPreprint, 2026Modern recommender systems must adapt to dynamic, need-specific objectives for diverse recommendation scenarios, yet most traditional recommenders are optimized for a single static target and struggle to reconfigure behavior on demand. Recent advances in reinforcement-learning-based post-training have unlocked strong instruction-following and reasoning capabilities in LLMs, suggesting a principled route for aligning them to complex recommendation goals. Motivated by this, we study closed-set autoregressive ranking, where an LLM generates a permutation over a fixed candidate set conditioned on user context and an explicit need instruction. However, applying RL to this setting faces two key obstacles: (i) sequence-level rewards yield coarse credit assignment that fails to provide fine-grained training signals, and (ii) interaction feedback is sparse and noisy, which together lead to inefficient and unstable updates. We propose FlexRec, a post-training RL framework that addresses both issues with (1) a causally grounded item-level reward based on counterfactual swaps within the remaining candidate pool, and (2) critic-guided, uncertainty-aware scaling that explicitly models reward uncertainty and down-weights low-confidence rewards to stabilize learning under sparse supervision. Across diverse recommendation scenarios and objectives, FlexRec achieves substantial gains: it improves NDCG@5 by up to \textbf{59%} and Recall@5 by up to \textbf{109.4%} in need-specific ranking, and further achieves up to \textbf{24.1%} Recall@5 improvement under generalization settings, outperforming strong traditional recommenders and LLM-based baselines.

- WSDM

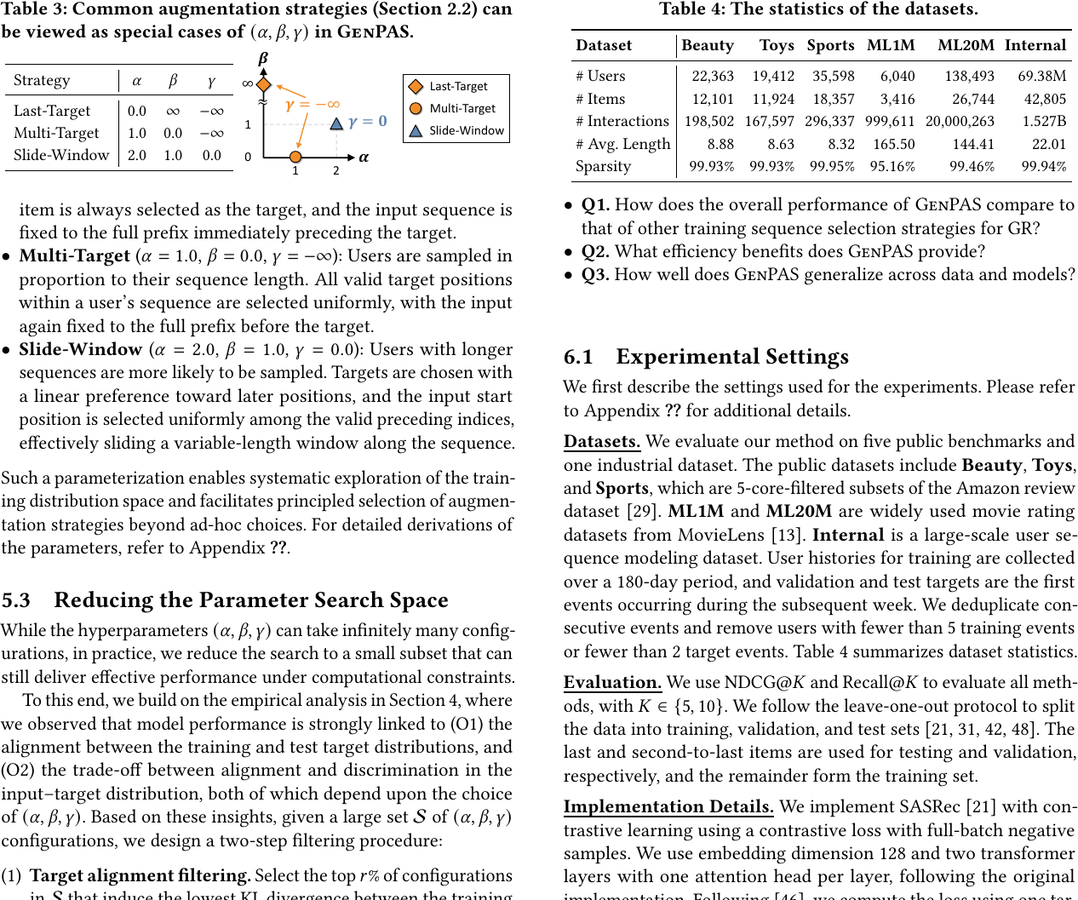

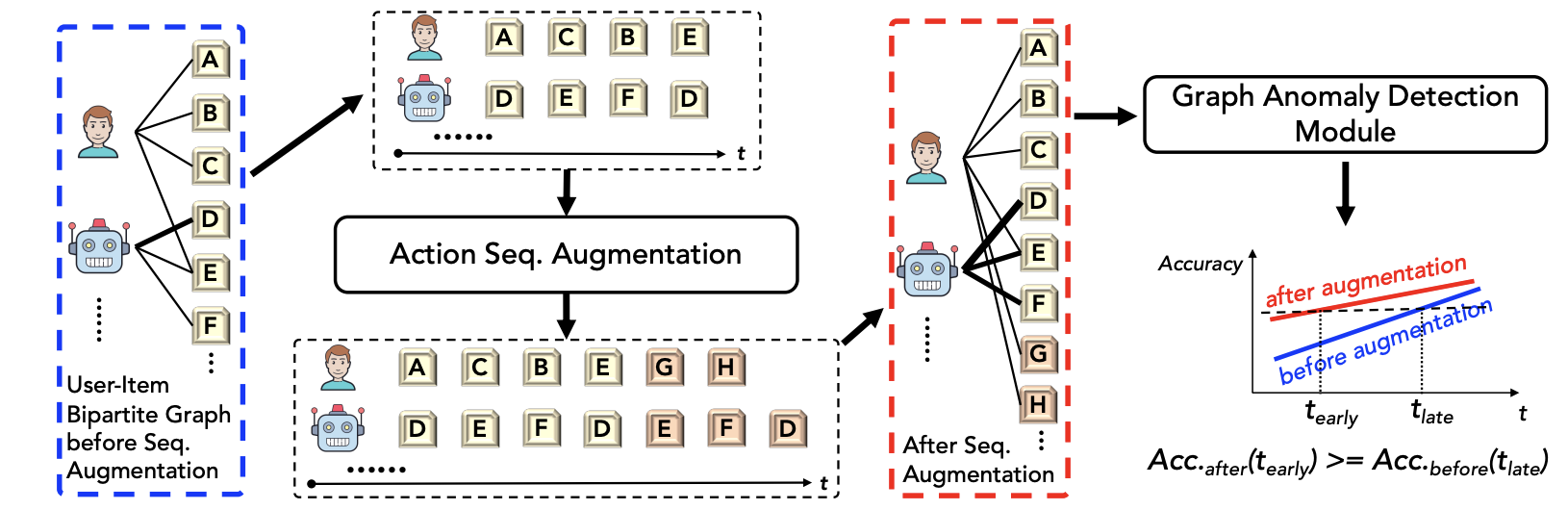

Sequential Data Augmentation for Generative RecommendationGeon Lee, Bhuvesh Kumar, Clark Ju, Tong Zhao, Kijung Shin, Neil Shah, and Liam CollinsIn ACM International Conference on Web Search and Data Mining, 2026

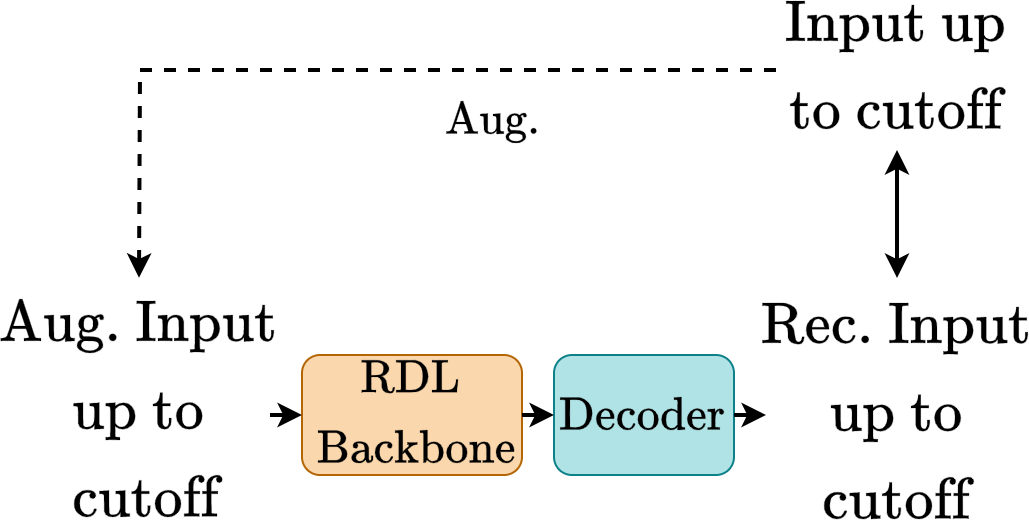

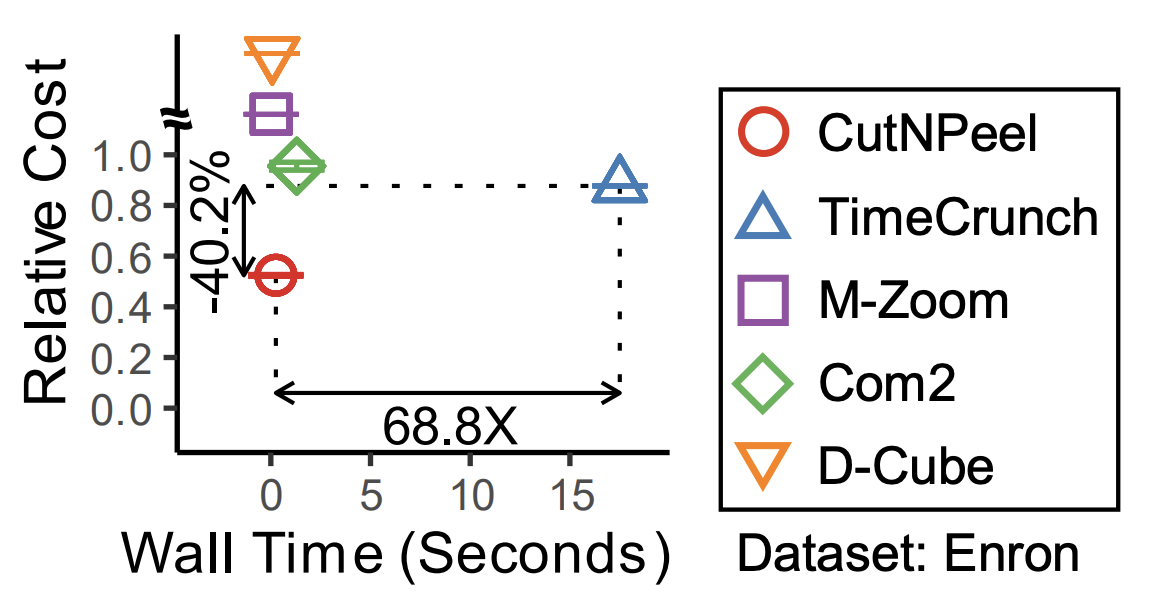

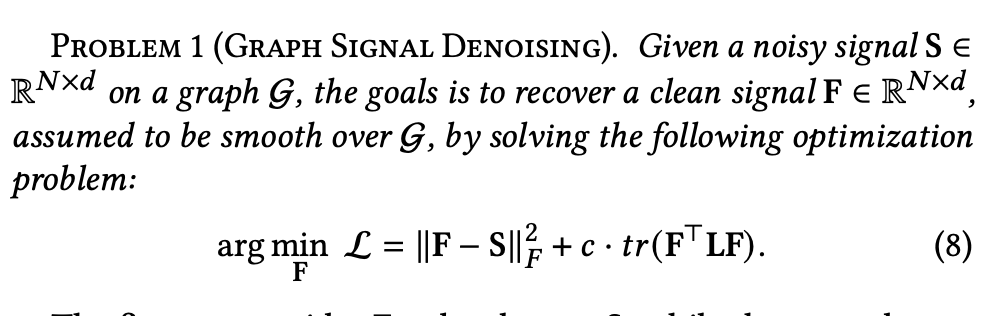

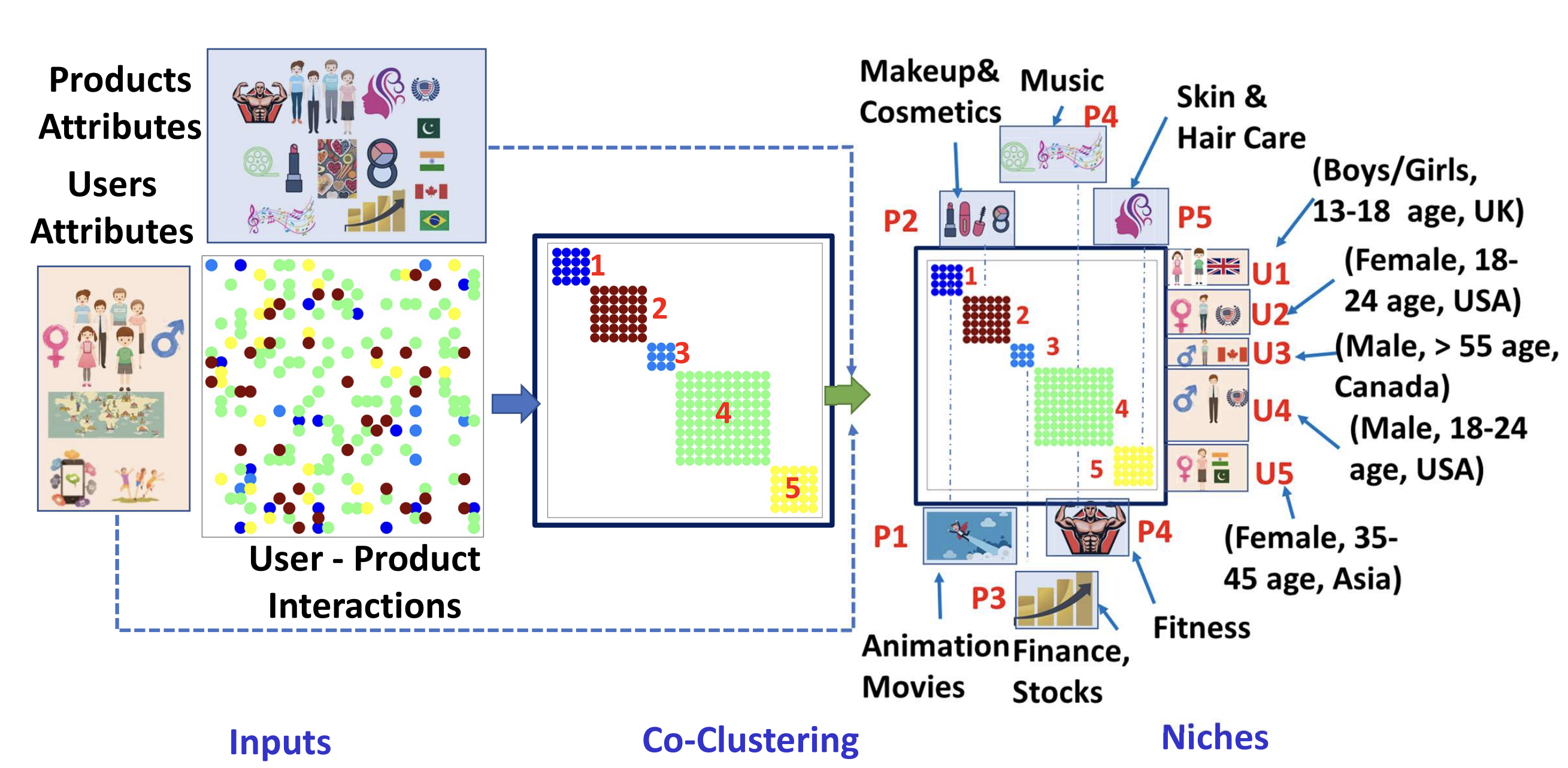

Sequential Data Augmentation for Generative RecommendationGeon Lee, Bhuvesh Kumar, Clark Ju, Tong Zhao, Kijung Shin, Neil Shah, and Liam CollinsIn ACM International Conference on Web Search and Data Mining, 2026Generative recommendation plays a crucial role in personalized systems, predicting users’ future interactions from their historical behavior sequences. A critical yet underexplored factor in training these models is data augmentation, the process of constructing training data from user interaction histories. By shaping the training distribution, data augmentation directly and often substantially affects model generalization and performance. Nevertheless, in much of the existing work, this process is simplified, applied inconsistently, or treated as a minor design choice, without a systematic and principled understanding of its effects. Motivated by our empirical finding that different augmentation strategies can yield large performance disparities, we conduct an in-depth analysis of how they reshape training distributions and influence alignment with future targets and generalization to unseen inputs. To systematize this design space, we propose GenPAS, a generalized and principled framework that models augmentation as a stochastic sampling process over input-target pairs with three bias-controlled steps: sequence sampling, target sampling, and input sampling. This formulation unifies widely used strategies as special cases and enables flexible control of the resulting training distribution. Our extensive experiments on benchmark and industrial datasets demonstrate that GenPAS yields superior accuracy, data efficiency, and parameter efficiency compared to existing strategies, providing practical guidance for principled training data construction in generative recommendation. Our code is available at https://github.com/snap-research/GenPAS.

- ACL

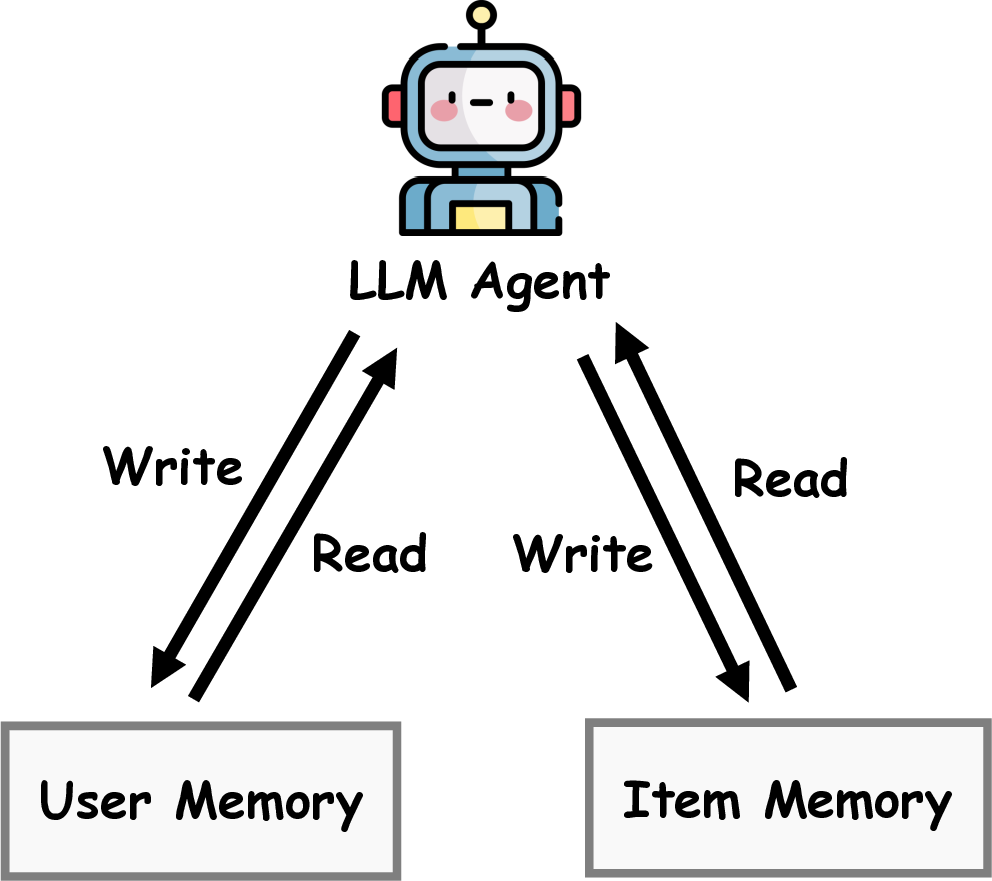

MemRec: Collaborative Memory-Augmented Agentic Recommender SystemWeixin Chen, Yuhan Zhao, Jingyuan Huang, Zihe Ye, Clark Mingxuan Ju, Tong Zhao, Neil Shah, Li Chen, and 1 more authorIn Annual Meeting of the Association for Computational Linguistics, 2026

MemRec: Collaborative Memory-Augmented Agentic Recommender SystemWeixin Chen, Yuhan Zhao, Jingyuan Huang, Zihe Ye, Clark Mingxuan Ju, Tong Zhao, Neil Shah, Li Chen, and 1 more authorIn Annual Meeting of the Association for Computational Linguistics, 2026The evolution of recommender systems has shifted preference storage from rating matrices and dense embeddings to semantic memory in the agentic era. Yet existing agents rely on isolated memory, overlooking crucial collaborative signals. Bridging this gap is hindered by the dual challenges of distilling vast graph contexts without overwhelming reasoning agents with cognitive load, and evolving the collaborative memory efficiently without incurring prohibitive computational costs. To address this, we propose MemRec, a framework that architecturally decouples reasoning from memory management to enable efficient collaborative augmentation. MemRec introduces a dedicated, cost-effective LM_Mem to manage a dynamic collaborative memory graph, serving synthesized, high-signal context to a downstream LLM_Rec. The framework operates via a practical pipeline featuring efficient retrieval and cost-effective asynchronous graph propagation that evolves memory in the background. Extensive experiments on four benchmarks demonstrate that MemRec achieves state-of-the-art performance. Furthermore, architectural analysis confirms its flexibility, establishing a new Pareto frontier that balances reasoning quality, cost, and privacy through support for diverse deployments, including local open-source models. Code:https://github.com/rutgerswiselab/memrec and Homepage: https://memrec.weixinchen.com

- KDD

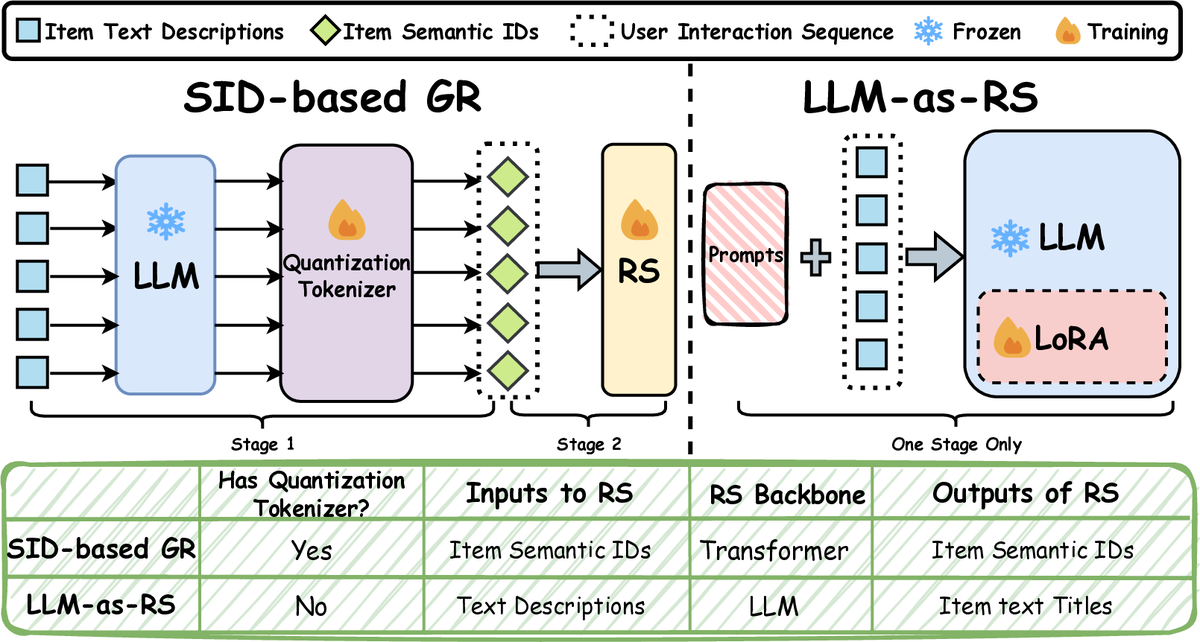

Understanding Generative Recommendation with Semantic IDs from a Model Scaling ViewJingzhe Liu, Liam Collins, Jiliang Tang, Tong Zhao, Neil Shah, and Clark JuIn ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2026

Understanding Generative Recommendation with Semantic IDs from a Model Scaling ViewJingzhe Liu, Liam Collins, Jiliang Tang, Tong Zhao, Neil Shah, and Clark JuIn ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2026Recent advancements in generative models have allowed the emergence of a promising paradigm for recommender systems (RS), known as Generative Recommendation (GR), which tries to unify rich item semantics and collaborative filtering signals. One popular modern approach is to use semantic IDs (SIDs), which are discrete codes quantized from the embeddings of modality encoders (e.g., large language or vision models), to represent items in an autoregressive user interaction sequence modeling setup (henceforth, SID-based GR). While generative models in other domains exhibit well-established scaling laws, our work reveals that SID-based GR shows significant bottlenecks while scaling up the model. In particular, the performance of SID-based GR quickly saturates as we enlarge each component: the modality encoder, the quantization tokenizer, and the RS itself. In this work, we identify the limited capacity of SIDs to encode item semantic information as one of the fundamental bottlenecks. Motivated by this observation, as an initial effort to obtain GR models with better scaling behaviors, we revisit another GR paradigm that directly uses large language models (LLMs) as recommenders (henceforth, LLM-as-RS). Our experiments show that the LLM-as-RS paradigm has superior model scaling properties and achieves up to 20 percent improvement over the best achievable performance of SID-based GR through scaling. We also challenge the prevailing belief that LLMs struggle to capture collaborative filtering information, showing that their ability to model user-item interactions improves as LLMs scale up. Our analyses on both SID-based GR and LLMs across model sizes from 44M to 14B parameters underscore the intrinsic scaling limits of SID-based GR and position LLM-as-RS as a promising path toward foundation models for GR.

2025

- preprint

Masked Diffusion for Generative RecommendationKulin Shah, Bhuvesh Kumar, Neil Shah, and Liam CollinsarXiv preprint, 2025

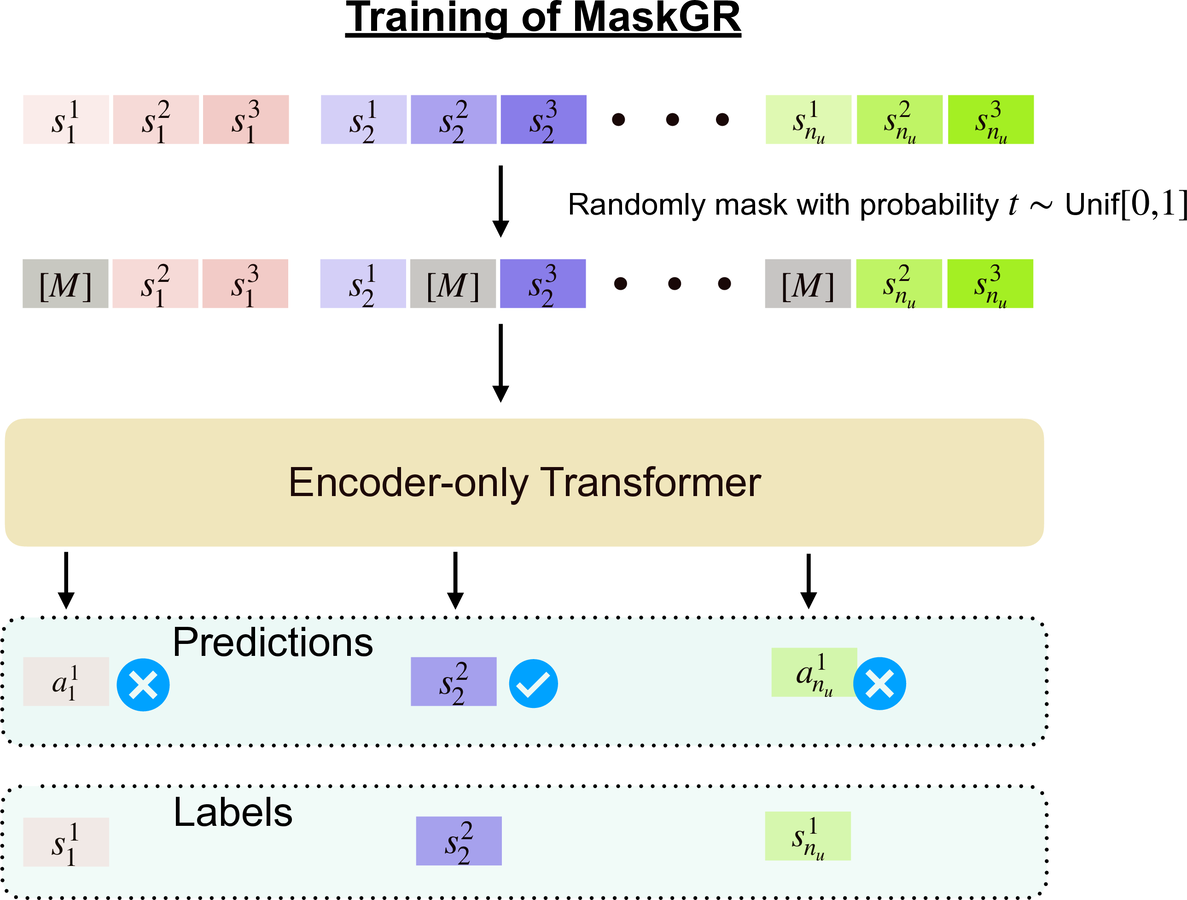

Masked Diffusion for Generative RecommendationKulin Shah, Bhuvesh Kumar, Neil Shah, and Liam CollinsarXiv preprint, 2025Generative recommendation (GR) with semantic IDs (SIDs) has emerged as a promising alternative to traditional recommendation approaches due to its performance gains, capitalization on semantic information provided through language model embeddings, and inference and storage efficiency. Existing GR with SIDs works frame the probability of a sequence of SIDs corresponding to a user’s interaction history using autoregressive modeling. While this has led to impressive next item prediction performances in certain settings, these autoregressive GR with SIDs models suffer from expensive inference due to sequential token-wise decoding, potentially inefficient use of training data and bias towards learning short-context relationships among tokens. Inspired by recent breakthroughs in NLP, we propose to instead model and learn the probability of a user’s sequence of SIDs using masked diffusion. Masked diffusion employs discrete masking noise to facilitate learning the sequence distribution, and models the probability of masked tokens as conditionally independent given the unmasked tokens, allowing for parallel decoding of the masked tokens. We demonstrate through thorough experiments that our proposed method consistently outperforms autoregressive modeling. This performance gap is especially pronounced in data-constrained settings and in terms of coarse-grained recall, consistent with our intuitions. Moreover, our approach allows the flexibility of predicting multiple SIDs in parallel during inference while maintaining superior performance to autoregressive modeling.

- preprint

Exploiting ID-Text Complementarity via Ensembling for Sequential RecommendationLiam Collins, Bhuvesh Kumar, Clark Ju, Tong Zhao, Donald Loveland, Leonardo Neves, and Neil ShaharXiv preprint, 2025

Exploiting ID-Text Complementarity via Ensembling for Sequential RecommendationLiam Collins, Bhuvesh Kumar, Clark Ju, Tong Zhao, Donald Loveland, Leonardo Neves, and Neil ShaharXiv preprint, 2025Modern Sequential Recommendation (SR) models commonly utilize modality features to represent items, motivated in large part by recent advancements in language and vision modeling. To do so, several works completely replace ID embeddings with modality embeddings, claiming that modality embeddings render ID embeddings unnecessary because they can match or even exceed ID embedding performance. On the other hand, many works jointly utilize ID and modality features, but posit that complex fusion strategies, such as multi-stage training and/or intricate alignment architectures, are necessary for this joint utilization. However, underlying both these lines of work is a lack of understanding of the complementarity of ID and modality features. In this work, we address this gap by studying the complementarity of ID- and text-based SR models. We show that these models do learn complementary signals, meaning that either should provide performance gain when used properly alongside the other. Motivated by this, we propose a new SR method that preserves ID-text complementarity through independent model training, then harnesses it through a simple ensembling strategy. Despite this method’s simplicity, we show it outperforms several competitive SR baselines, implying that both ID and text features are necessary to achieve state-of-the-art SR performance but complex fusion architectures are not.

- CIKM

Pretrained Language Model based Cold-Start Recommendation with Meta-Item EmbeddingsZaiyi Zheng, Yaochen Zhu, Haochen Liu, Clark Ju, Tong Zhao, Neil Shah, and Jundong LiIn ACM International Conference on Information and Knowledge Management, 2025

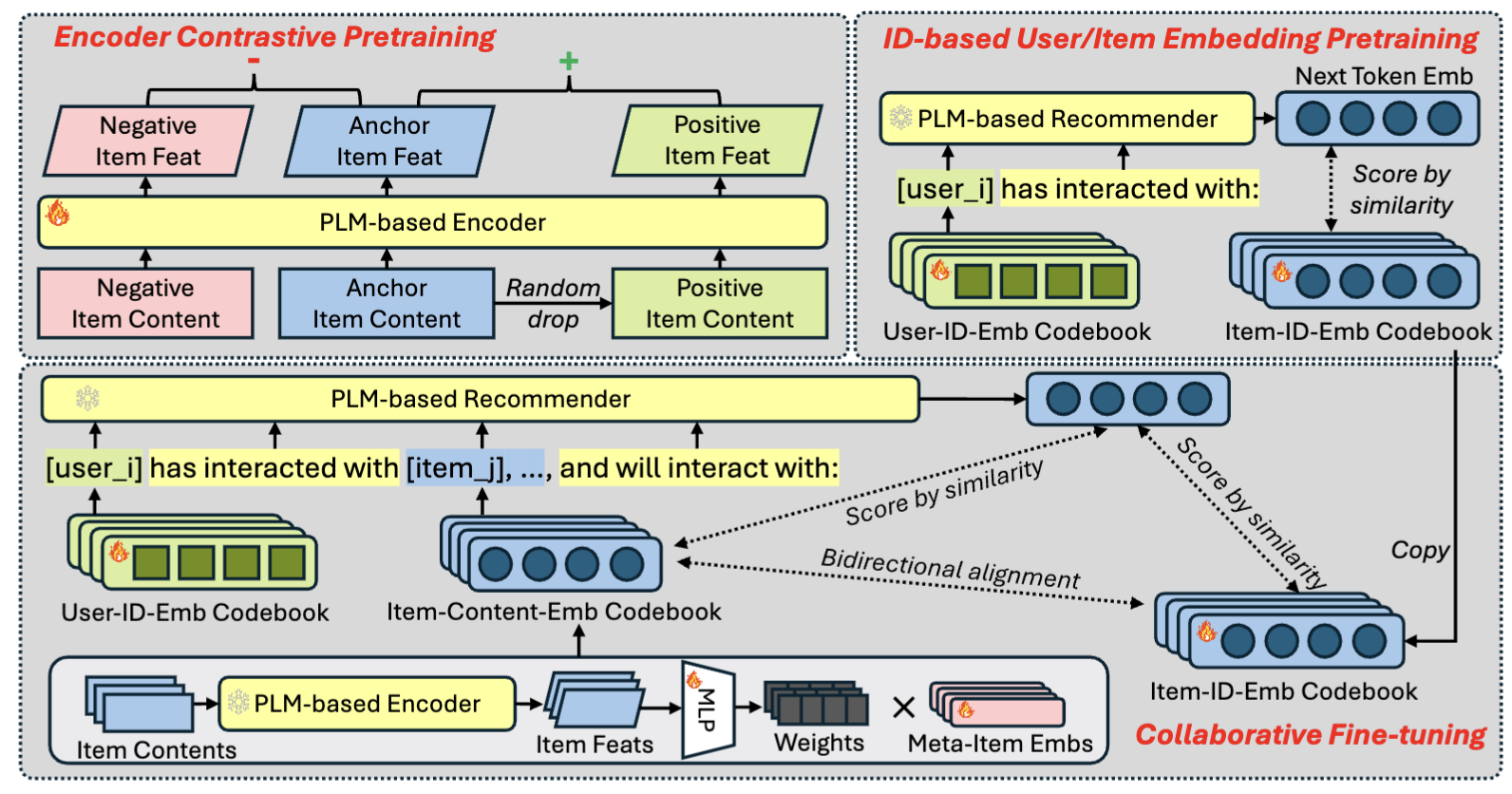

Pretrained Language Model based Cold-Start Recommendation with Meta-Item EmbeddingsZaiyi Zheng, Yaochen Zhu, Haochen Liu, Clark Ju, Tong Zhao, Neil Shah, and Jundong LiIn ACM International Conference on Information and Knowledge Management, 2025Recently, pretrained large language models (LLMs) have been widely adopted in recommendation systems to leverage their textual understanding and reasoning abilities to model user behaviors and suggest future items. A key challenge in this setting is that items on most platforms are not included in the LLM’s training data. Therefore, existing methods often fine-tune LLMs by introducing auxiliary item tokens to capture item semantics. However, in real-world applications such as e-commerce and short video platforms, the item space evolves rapidly, which gives rise to a cold-start setting, where many newly introduced items receive little or even no user engagement. This poses challenges in both learning accurate item token embeddings and generalizing efficiently to accommodate the continual influx of new items. In this work, we propose a novel meta-item token learning strategy to address both these challenges simultaneously. Specifically, we introduce MI4Rec, an LLM-based approach for recommendation that uses just a few learnable meta-item tokens and an LLM encoder to dynamically aggregate meta-items based on item content. We show that this paradigm allows highly efficient and accurate learning in such challenging settings. Extensive experiments on Yelp and Amazon reviews datasets demonstrate the effectiveness of MI4Rec in both warm-start and cold-start recommendations. Notably, MI4Rec achieves an average performance improvement of 20.4% in Recall and NDCG compared to the best-performing baselines. The implementation of MI4Rec is available at https://github.com/zhengzaiyi/MI4Rec.

- CIKM

Generative Recommendation with Semantic IDs: A Practitioner’s HandbookClark Ju, Liam Collins, Leonardo Neves, Bhuvesh Kumar, Louis Wang, Tong Zhao, and Neil ShahIn ACM International Conference on Information and Knowledge Management, 2025

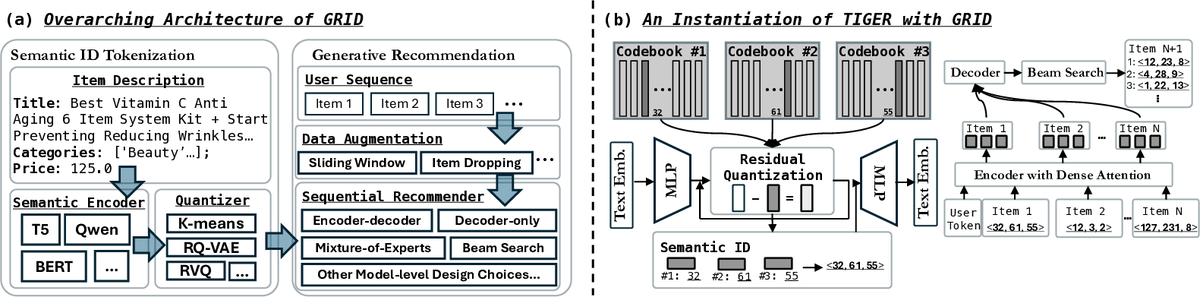

Generative Recommendation with Semantic IDs: A Practitioner’s HandbookClark Ju, Liam Collins, Leonardo Neves, Bhuvesh Kumar, Louis Wang, Tong Zhao, and Neil ShahIn ACM International Conference on Information and Knowledge Management, 2025Generative recommendation (GR) has gained increasing attention for its promising performance compared to traditional models. A key factor contributing to the success of GR is the semantic ID (SID), which converts continuous semantic representations (e.g., from large language models) into discrete ID sequences. This enables GR models with SIDs to both incorporate semantic information and learn collaborative filtering signals, while retaining the benefits of discrete decoding. However, varied modeling techniques, hyper-parameters, and experimental setups in existing literature make direct comparisons between GR proposals challenging. Furthermore, the absence of an open-source, unified framework hinders systematic benchmarking and extension, slowing model iteration. To address this challenge, our work introduces and open-sources a framework for Generative Recommendation with semantic ID, namely GRID, specifically designed for modularity to facilitate easy component swapping and accelerate idea iteration. Using GRID, we systematically experiment with and ablate different components of GR models with SIDs on public benchmarks. Our comprehensive experiments with GRID reveal that many overlooked architectural components in GR models with SIDs substantially impact performance. This offers both novel insights and validates the utility of an open-source platform for robust benchmarking and GR research advancement. GRID is open-sourced at https://github.com/snap-research/GRID.

- NeurIPS

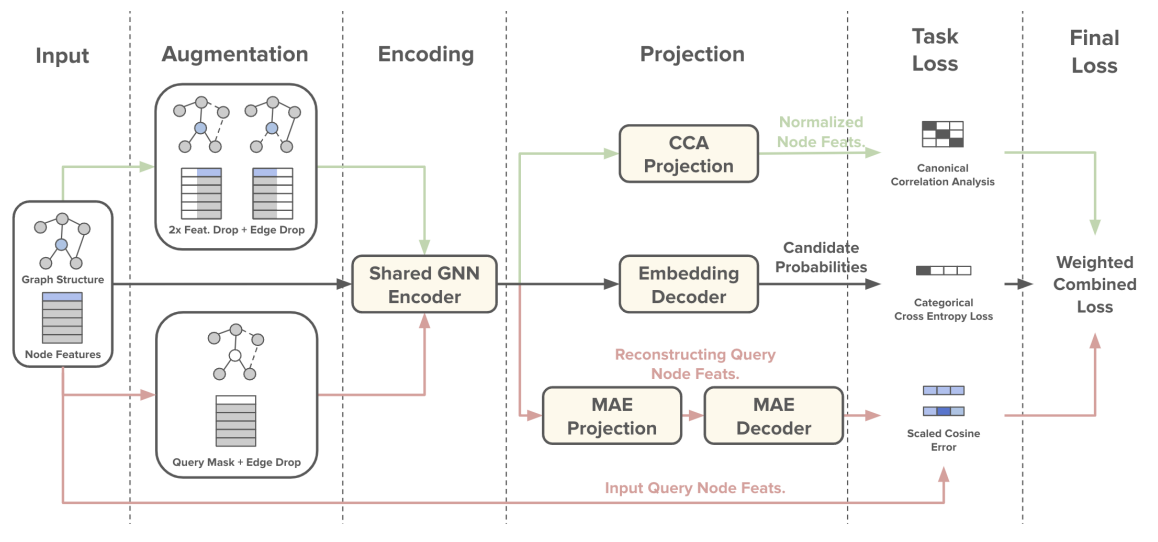

A Pre-Training Framework for Relational Data with Information Theoretic PrinciplesQuang Truong, Zhikai Chen, Clark Ju, Tong Zhao, Neil Shah, and Jiliang TangIn Conference on Neural Information Processing Systems, 2025

A Pre-Training Framework for Relational Data with Information Theoretic PrinciplesQuang Truong, Zhikai Chen, Clark Ju, Tong Zhao, Neil Shah, and Jiliang TangIn Conference on Neural Information Processing Systems, 2025Relational databases underpin critical infrastructure across a wide range of domains, yet the design of generalizable pre-training strategies for learning from relational databases remains an open challenge due to task heterogeneity. Specifically, there exist many possible downstream tasks, as tasks are defined based on relational schema graphs, temporal dependencies, and SQL-defined label logics. An effective pre-training framework is desired to take these factors into account in order to obtain task-aware representations. By incorporating knowledge of the underlying distribution that drives label generation, downstream tasks can benefit from relevant side-channel information. To bridge this gap, we introduce Task Vector Estimation (TVE), a novel pre-training framework that constructs predictive supervisory signals via set-based aggregation over schema traversal graphs, explicitly modeling next-window relational dynamics. We formalize our approach through an information-theoretic lens, demonstrating that task-informed representations retain more relevant signals than those obtained without task priors. Extensive experiments on the RelBench benchmark show that TVE consistently outperforms traditional pre-training baselines. Our findings advocate for pre-training objectives that encode task heterogeneity and temporal structure as design principles for predictive modeling on relational databases. Our code is publicly available at https://github.com/quang-truong/task-vector-estimation.

- LoG

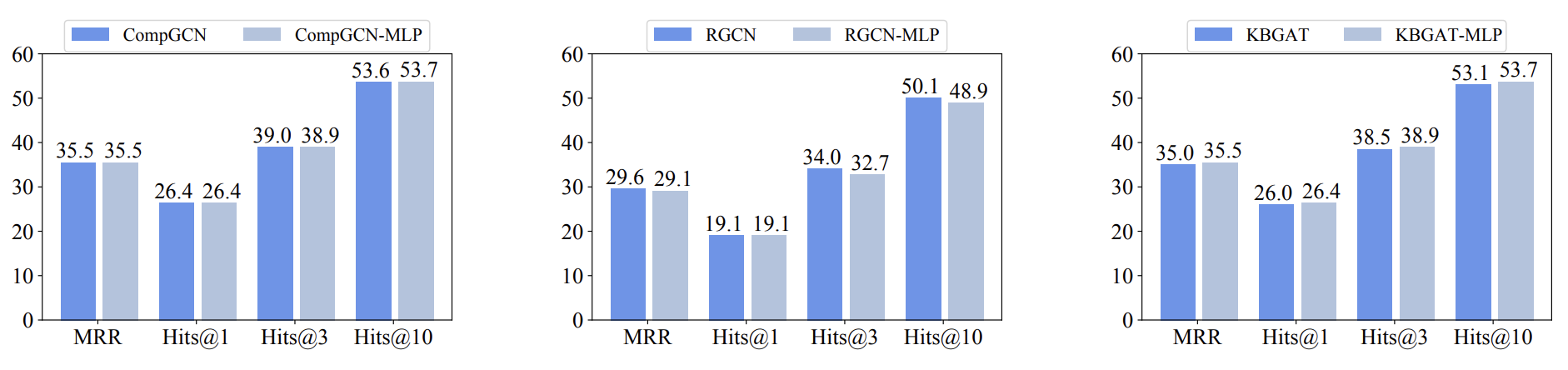

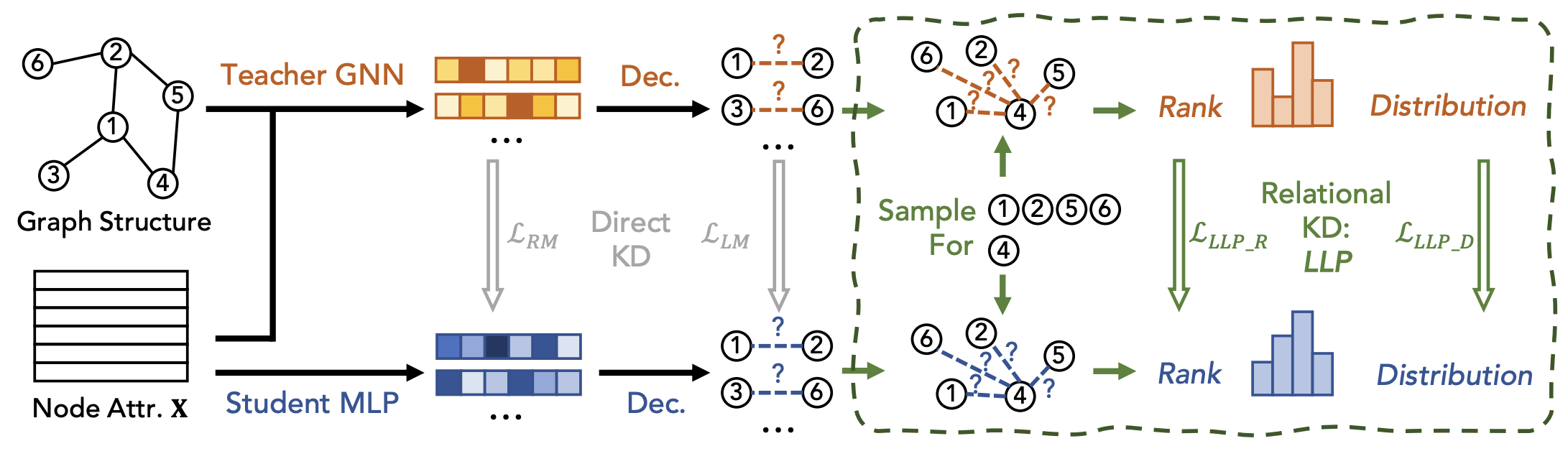

Weak Models Can be Good Teachers: A Case Study on Link Prediction with MLPsZongyue Qin, Shichang Zhang, Clark Ju, Tong Zhao, Neil Shah, and Yizhou SunIn Learning on Graphs, 2025

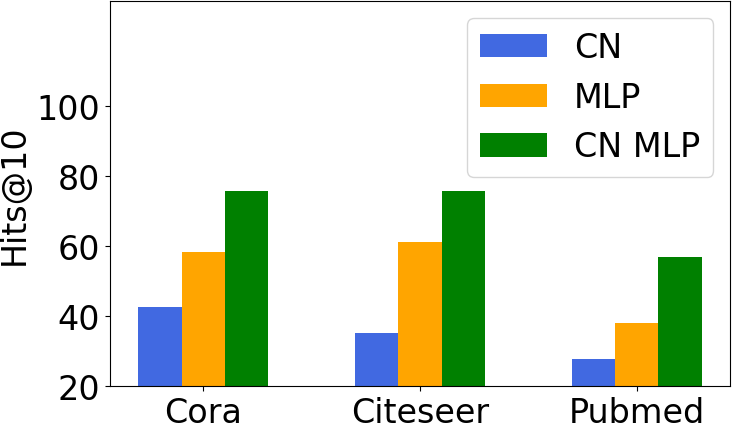

Weak Models Can be Good Teachers: A Case Study on Link Prediction with MLPsZongyue Qin, Shichang Zhang, Clark Ju, Tong Zhao, Neil Shah, and Yizhou SunIn Learning on Graphs, 2025Link prediction is a crucial graph-learning task with applications including citation prediction and product recommendation. Distilling Graph Neural Networks (GNNs) teachers into Multi-Layer Perceptrons (MLPs) students has emerged as an effective approach to achieve strong performance and reducing computational cost by removing graph dependency. However, existing distillation methods only use standard GNNs and overlook alternative teachers such as specialized model for link prediction (GNN4LP) and heuristic methods (e.g., common neighbors). This paper first explores the impact of different teachers in GNN-to-MLP distillation. Surprisingly, we find that stronger teachers do not always produce stronger students: MLPs distilled from GNN4LP can underperform those distilled from simpler GNNs, while weaker heuristic methods can teach MLPs to near-GNN performance with drastically reduced training costs. Building on these insights, we propose Ensemble Heuristic-Distilled MLPs (EHDM), which eliminates graph dependencies while effectively integrating complementary signals via a gating mechanism. Experiments on ten datasets show an average 7.93% improvement over previous GNN-to-MLP approaches with 1.95-3.32 times less training time, indicating EHDM is an efficient and effective link prediction method. Our code is available at https://github.com/ZongyueQin/EHDM

- TMLR

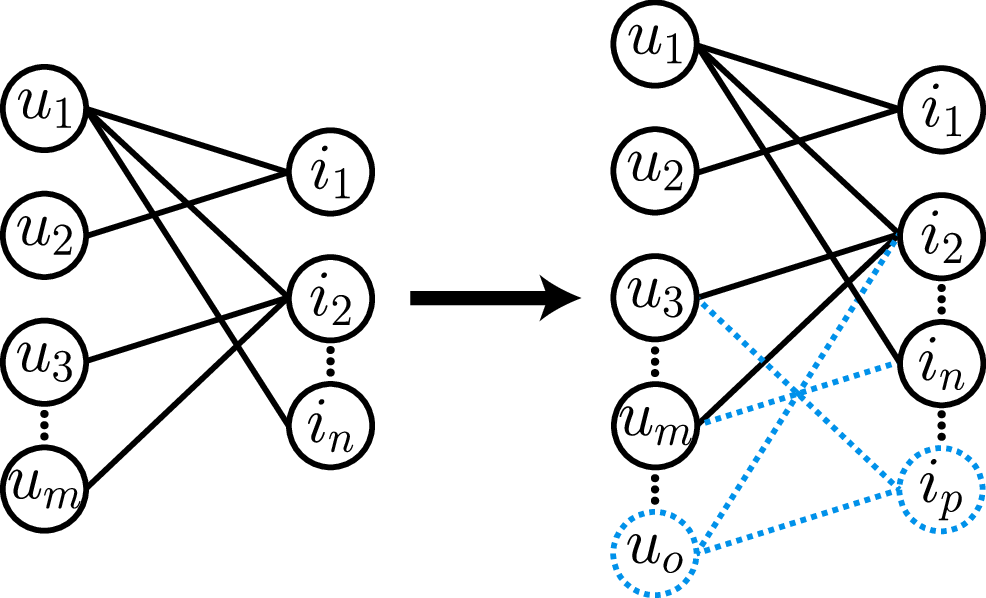

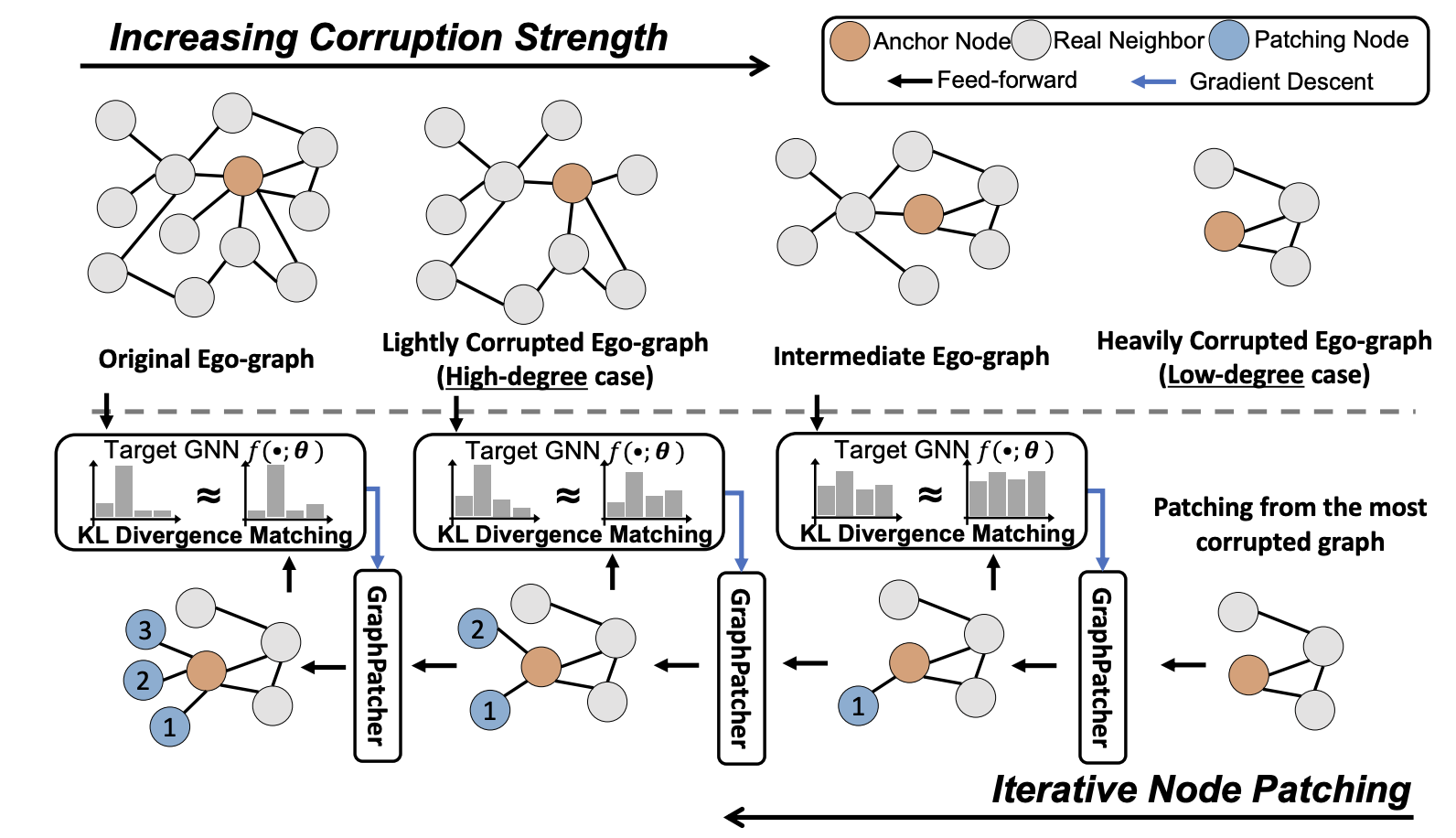

Node Duplication Improves Cold-start Link PredictionZhichun Guo, Tong Zhao, Yozen Liu, Kaiwen Dong, William Shiao, Neil Shah, and Nitesh V. ChawlaIn Transactions on Machine Learning Research, 2025

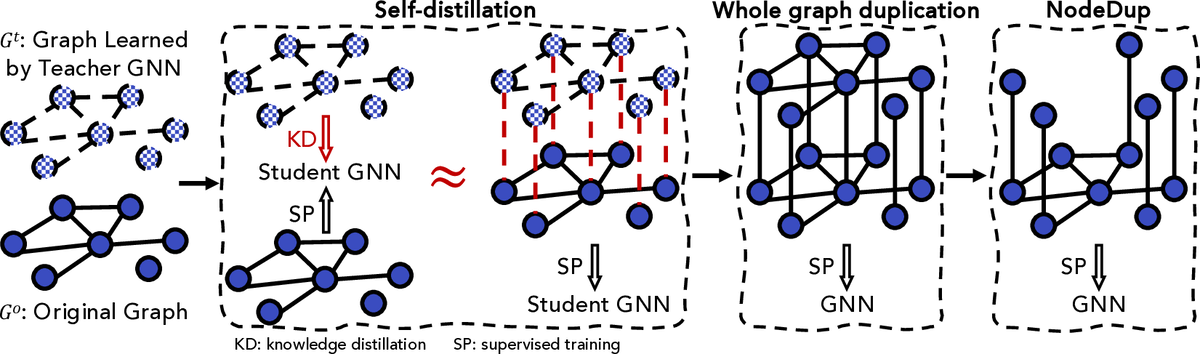

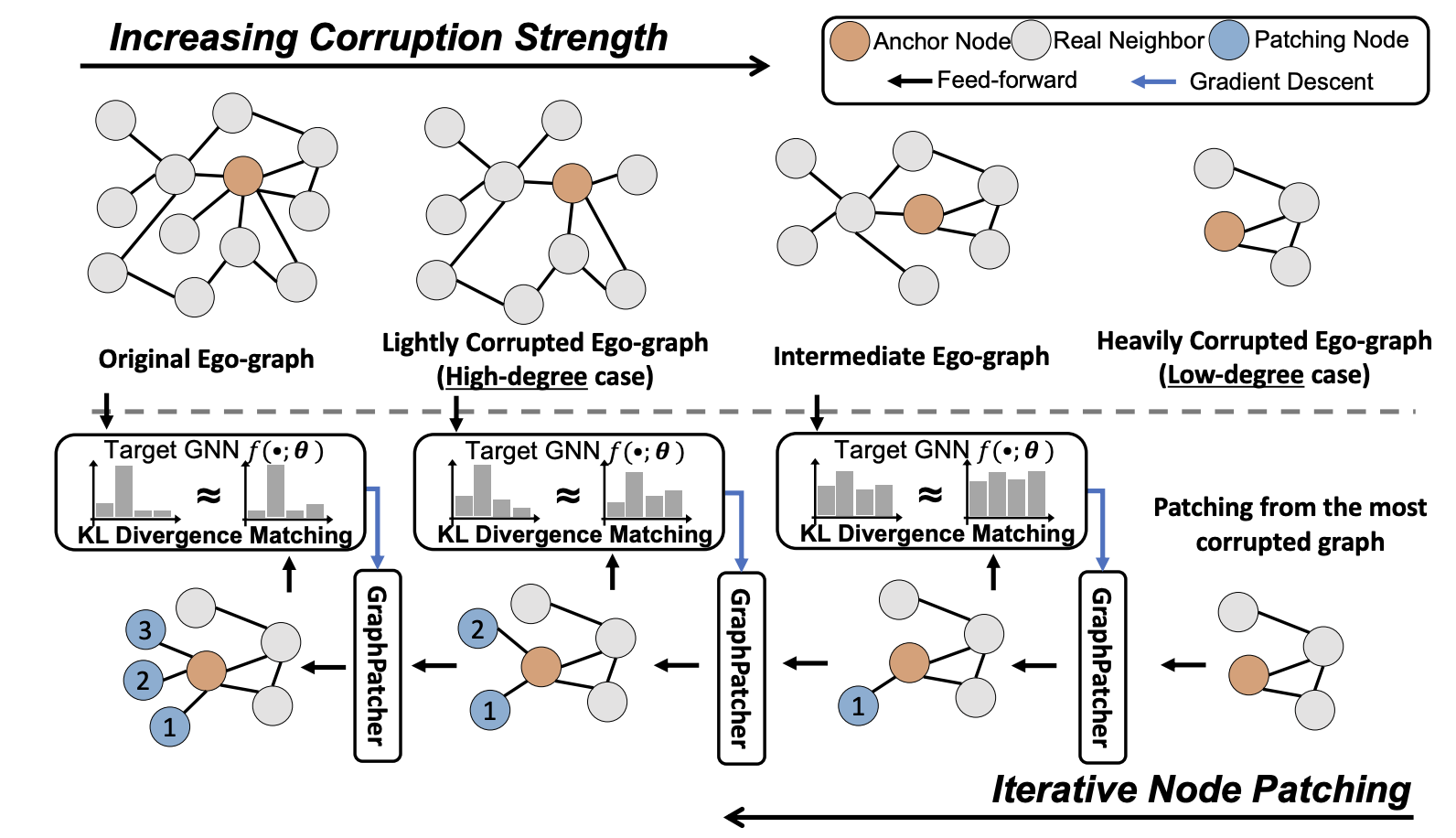

Node Duplication Improves Cold-start Link PredictionZhichun Guo, Tong Zhao, Yozen Liu, Kaiwen Dong, William Shiao, Neil Shah, and Nitesh V. ChawlaIn Transactions on Machine Learning Research, 2025Graph Neural Networks (GNNs) are prominent in graph machine learning and have shown state-of-the-art performance in Link Prediction (LP) tasks. Nonetheless, recent studies show that GNNs struggle to produce good results on low-degree nodes despite their overall strong performance. In practical applications of LP, like recommendation systems, improving performance on low-degree nodes is critical, as it amounts to tackling the cold-start problem of improving the experiences of users with few observed interactions. In this paper, we investigate improving GNNs’ LP performance on low-degree nodes while preserving their performance on high-degree nodes and propose a simple yet surprisingly effective augmentation technique called NodeDup. Specifically, NodeDup duplicates low-degree nodes and creates links between nodes and their own duplicates before following the standard supervised LP training scheme. By leveraging a ”multi-view” perspective for low-degree nodes, NodeDup shows significant LP performance improvements on low-degree nodes without compromising any performance on high-degree nodes. Additionally, as a plug-and-play augmentation module, NodeDup can be easily applied to existing GNNs with very light computational cost. Extensive experiments show that NodeDup achieves 38.49%, 13.34%, and 6.76% improvements on isolated, low-degree, and warm nodes, respectively, on average across all datasets compared to GNNs and state-of-the-art cold-start methods.

- DMLR

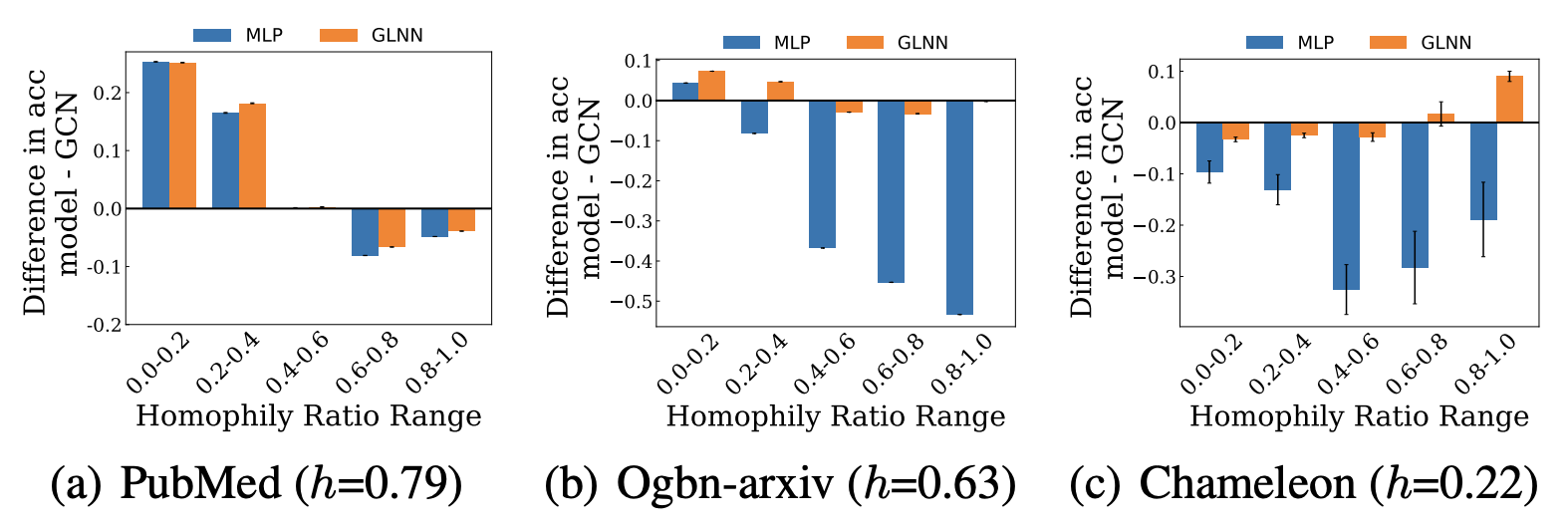

Do Graph Neural Networks Improve Node Representation Learning for All?Yushun Dong, William Shiao, Yozen Liu, Jundong Li, Neil Shah, and Tong ZhaoIn Data-Centric Machine Learning Research, 2025

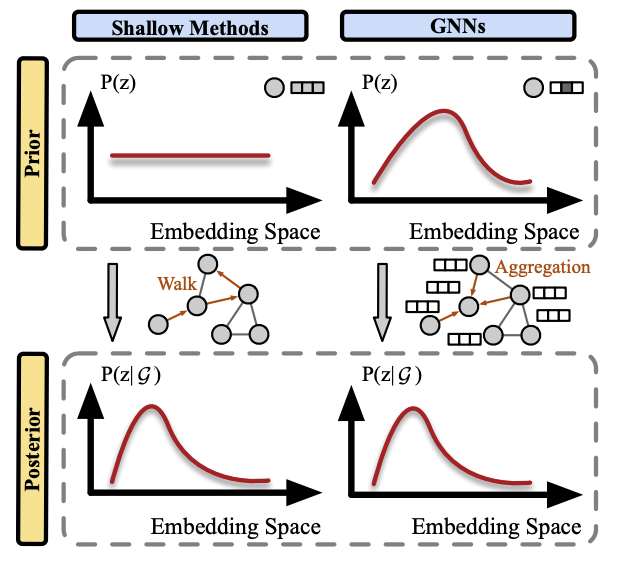

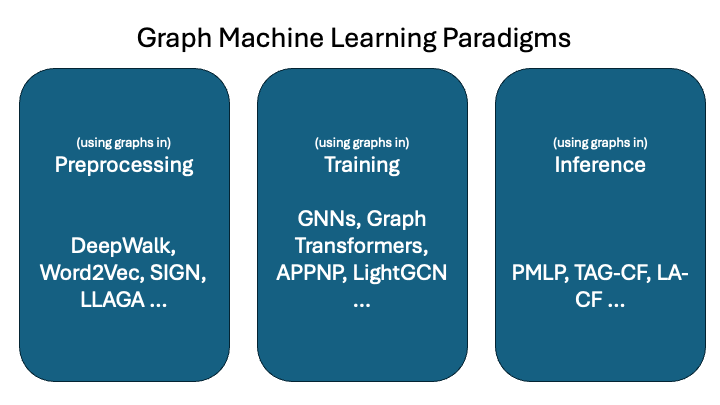

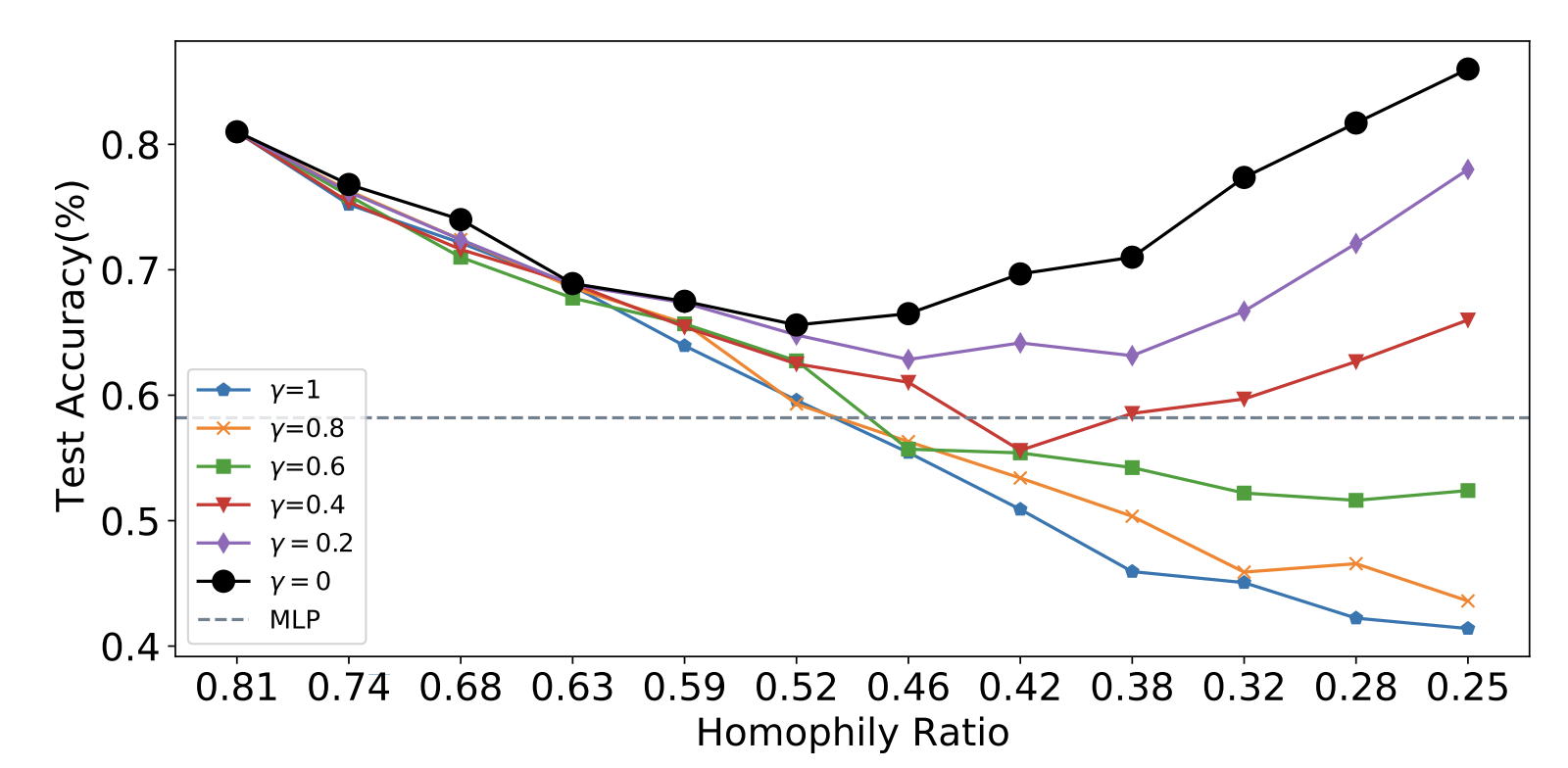

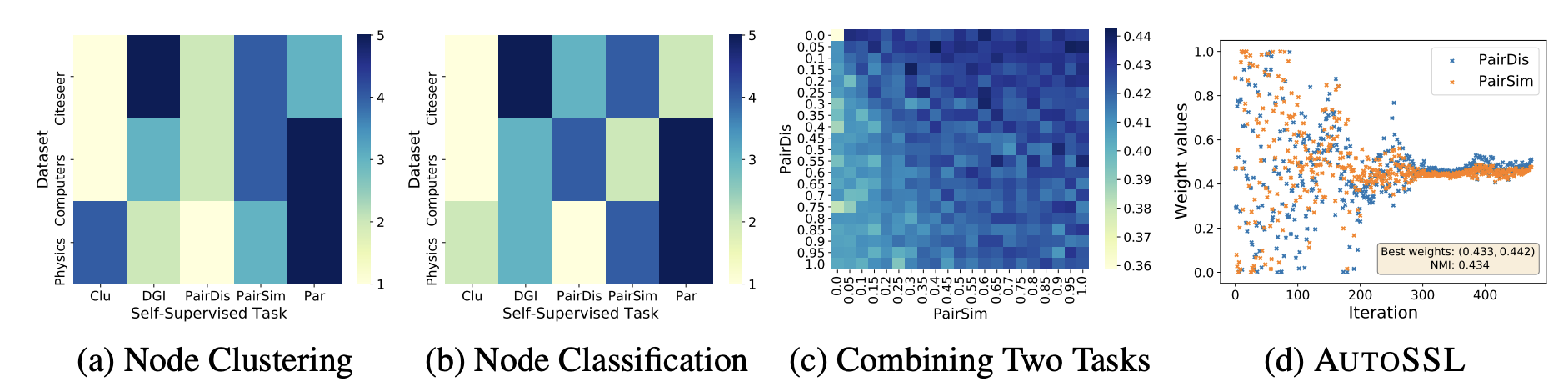

Do Graph Neural Networks Improve Node Representation Learning for All?Yushun Dong, William Shiao, Yozen Liu, Jundong Li, Neil Shah, and Tong ZhaoIn Data-Centric Machine Learning Research, 2025Graph Neural Networks (GNNs) have garnered increasing attention in recent years, given their significant proficiency in various graph learning tasks. Consequently, there has been a notable transition away from the conventional and prevalent shallow graph embedding methods which pre-dated GNNs. However, in tandem with this transition which is pre-supposed in the literature, an imperative question arises: do GNNs always outperform shallow embedding methods in node representation learning? This question remains inadequately explored, as the field of graph machine learning still lacks a systematic understanding of their relative strengths and limitations. To address this gap, we propose a principled framework that unifies the ideologies of representative shallow graph embedding methods and GNNs. With comparative analysis, we show that GNNs actually bear drawbacks that are typically not shared by shallow embedding methods. These drawbacks are often masked by data characteristics in commonly used benchmarks and thus not well-discussed in the literature, leading to potential suboptimal performance when GNNs are indiscriminately adopted in applications. We further show that our analysis can be generalized to GNNs under various learning paradigms, which provides further insights to emphasize the research significance of shallow embedding methods. Finally, with these insights, we conclude with a guide to meet various needs of researchers and practitioners.

- KDD

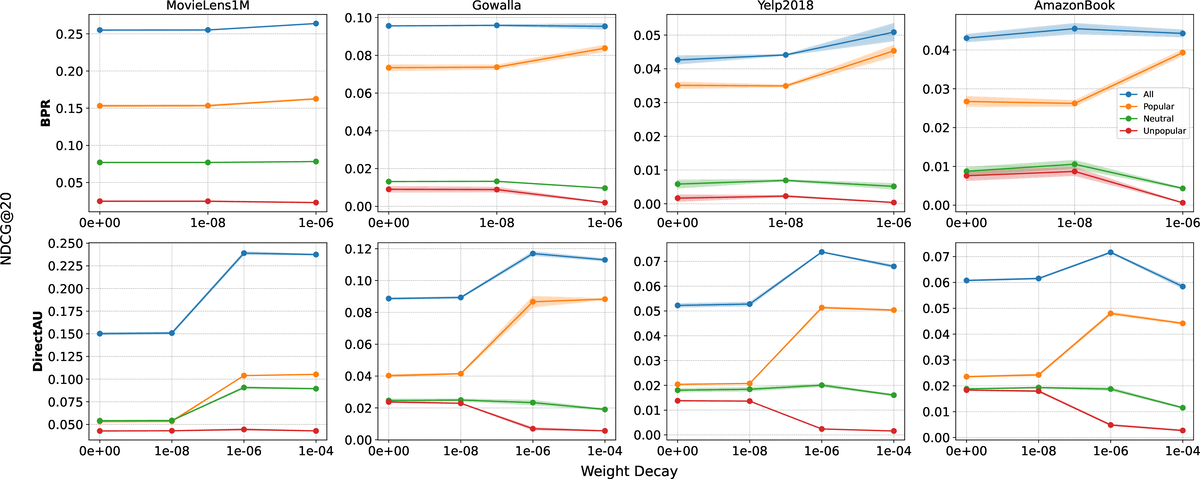

On the Role of Weight Decay in Collaborative Filtering: A Popularity PerspectiveDonald Loveland, Mingxuan Ju, Tong Zhao, Neil Shah, and Danai KoutraIn ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2025

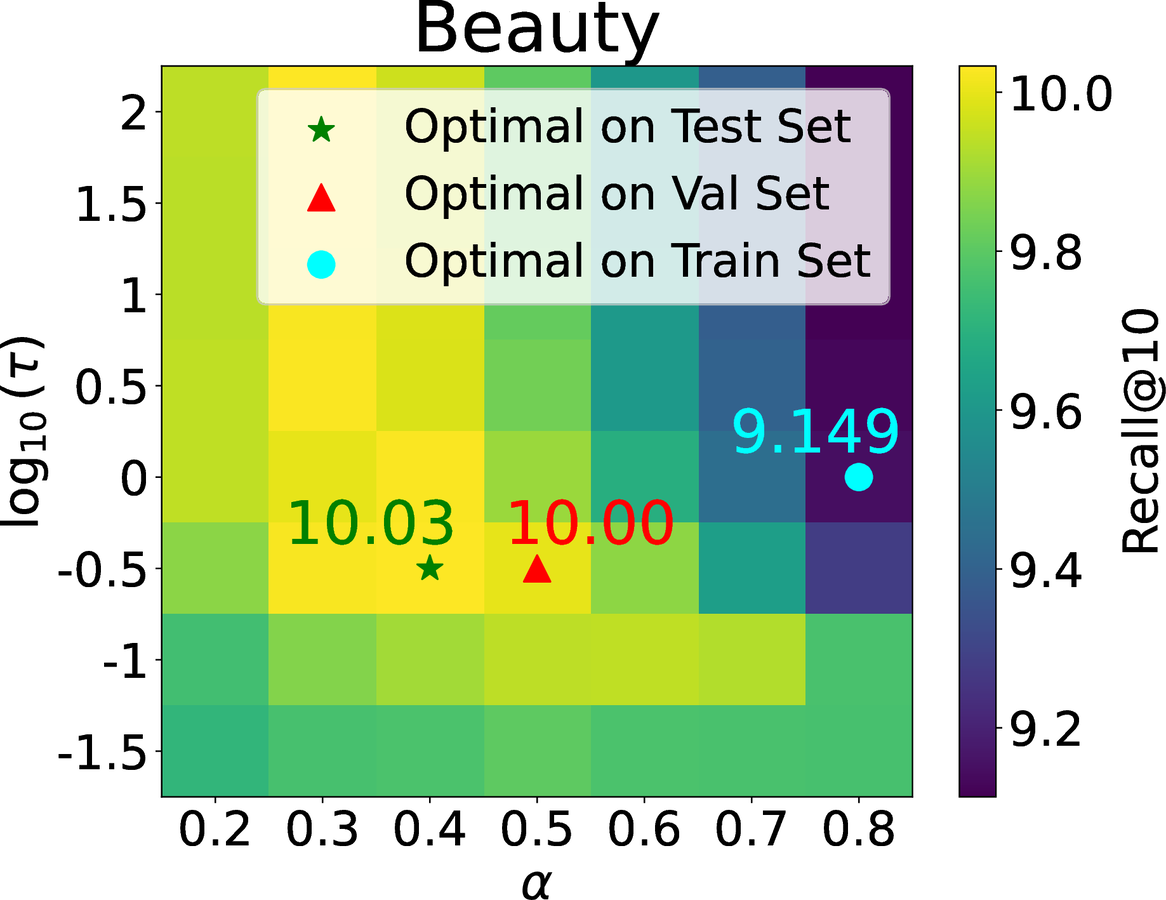

On the Role of Weight Decay in Collaborative Filtering: A Popularity PerspectiveDonald Loveland, Mingxuan Ju, Tong Zhao, Neil Shah, and Danai KoutraIn ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2025Collaborative filtering (CF) enables large-scale recommendation systems by encoding information from historical user-item interactions into dense ID-embedding tables. However, as embedding tables grow, closed-form solutions become impractical, often necessitating the use of mini-batch gradient descent for training. Despite extensive work on designing loss functions to train CF models, we argue that one core component of these pipelines is heavily overlooked: weight decay. Attaining high-performing models typically requires careful tuning of weight decay, regardless of loss, yet its necessity is not well understood. In this work, we question why weight decay is crucial in CF pipelines and how it impacts training. Through theoretical and empirical analysis, we surprisingly uncover that weight decay’s primary function is to encode popularity information into the magnitudes of the embedding vectors. Moreover, we find that tuning weight decay acts as a coarse, non-linear knob to influence preference towards popular or unpopular items. Based on these findings, we propose PRISM (Popularity-awaRe Initialization Strategy for embedding Magnitudes), a straightforward yet effective solution to simplify the training of high-performing CF models. PRISM pre-encodes the popularity information typically learned through weight decay, eliminating its necessity. Our experiments show that PRISM improves performance by up to 4.77% and reduces training times by 38.48%, compared to state-of-the-art training strategies. Additionally, we parameterize PRISM to modulate the initialization strength, offering a cost-effective and meaningful strategy to mitigate popularity bias.

- KDD

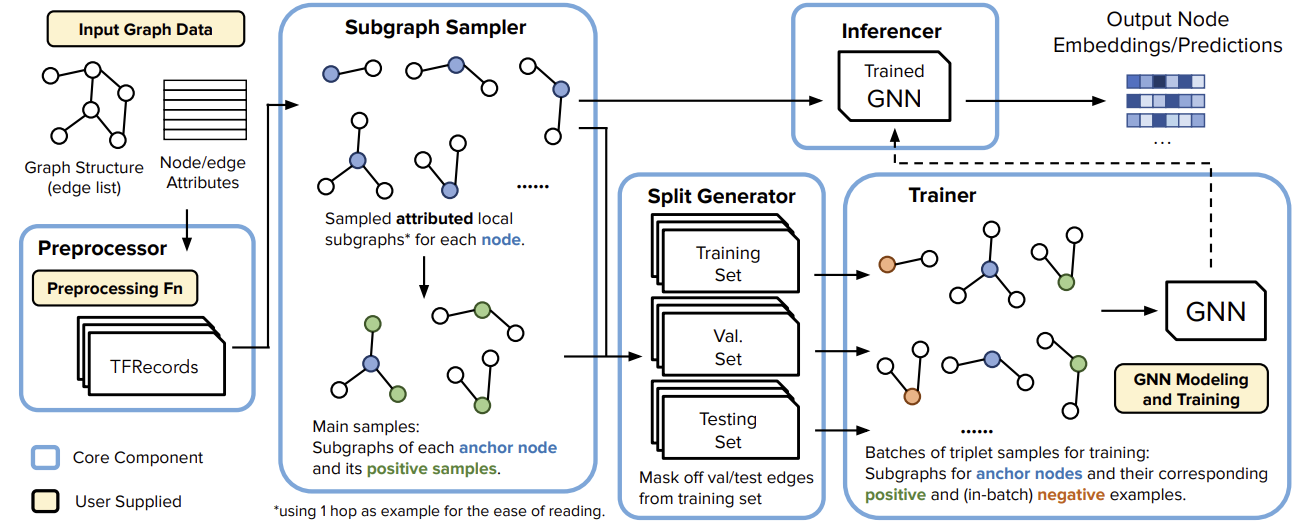

GiGL: Large-Scale Graph Neural Networks at SnapchatTong Zhao, Yozen Liu, Matthew Kolodner, Kyle Montemayor, Elham Ghazizadeh, Ankit Batra, Zihao Fan, Xiaobin Gao, and 7 more authorsIn ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2025

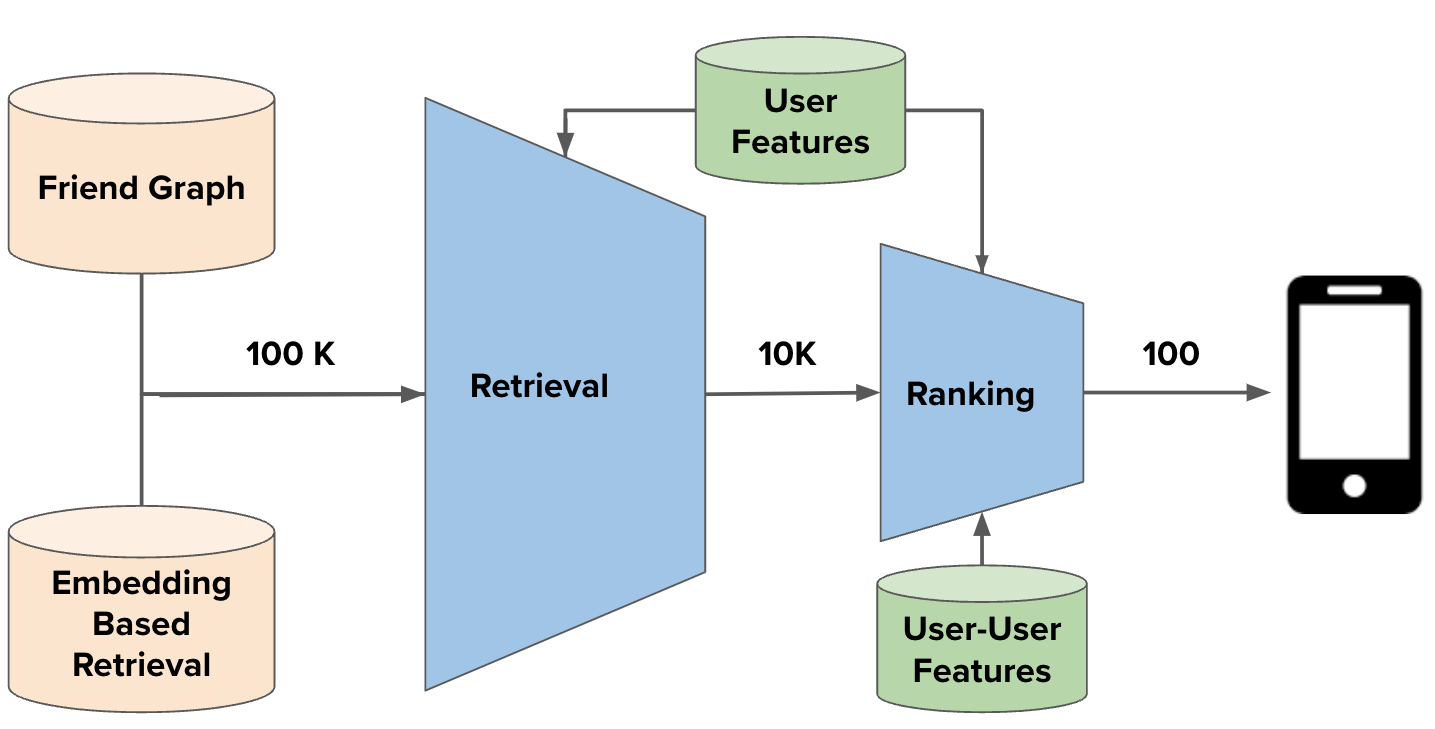

GiGL: Large-Scale Graph Neural Networks at SnapchatTong Zhao, Yozen Liu, Matthew Kolodner, Kyle Montemayor, Elham Ghazizadeh, Ankit Batra, Zihao Fan, Xiaobin Gao, and 7 more authorsIn ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2025Recent advances in graph machine learning (ML) with the introduction of Graph Neural Networks (GNNs) have led to a widespread interest in applying these approaches to business applications at scale. GNNs enable differentiable end-to-end (E2E) learning of model parameters given graph structure which enables optimization towards popular node, edge (link) and graph-level tasks. While the research innovation in new GNN layers and training strategies has been rapid, industrial adoption and utility of GNNs has lagged considerably due to the unique scale challenges that large-scale graph ML problems create. In this work, we share our approach to training, inference, and utilization of GNNs at Snapchat. To this end, we present GiGL (Gigantic Graph Learning), an open-source library to enable large-scale distributed graph ML to the benefit of researchers, ML engineers, and practitioners. We use GiGL internally at Snapchat to manage the heavy lifting of GNN workflows, including graph data preprocessing from relational DBs, subgraph sampling, distributed training, inference, and orchestration. GiGL is designed to interface cleanly with open-source GNN modeling libraries prominent in academia like PyTorch Geometric (PyG), while handling scaling and productionization challenges that make it easier for internal practitioners to focus on modeling. GiGL is used in multiple production settings, and has powered over 35 launches across multiple business domains in the last 2 years in the contexts of friend recommendation, content recommendation and advertising. This work details high-level design and tools the library provides, scaling properties, case studies in diverse business settings with industry-scale graphs, and several key lessons learned in employing graph ML at scale on large social data. GiGL is open-sourced at https://github.com/Snapchat/GiGL.

- KDD

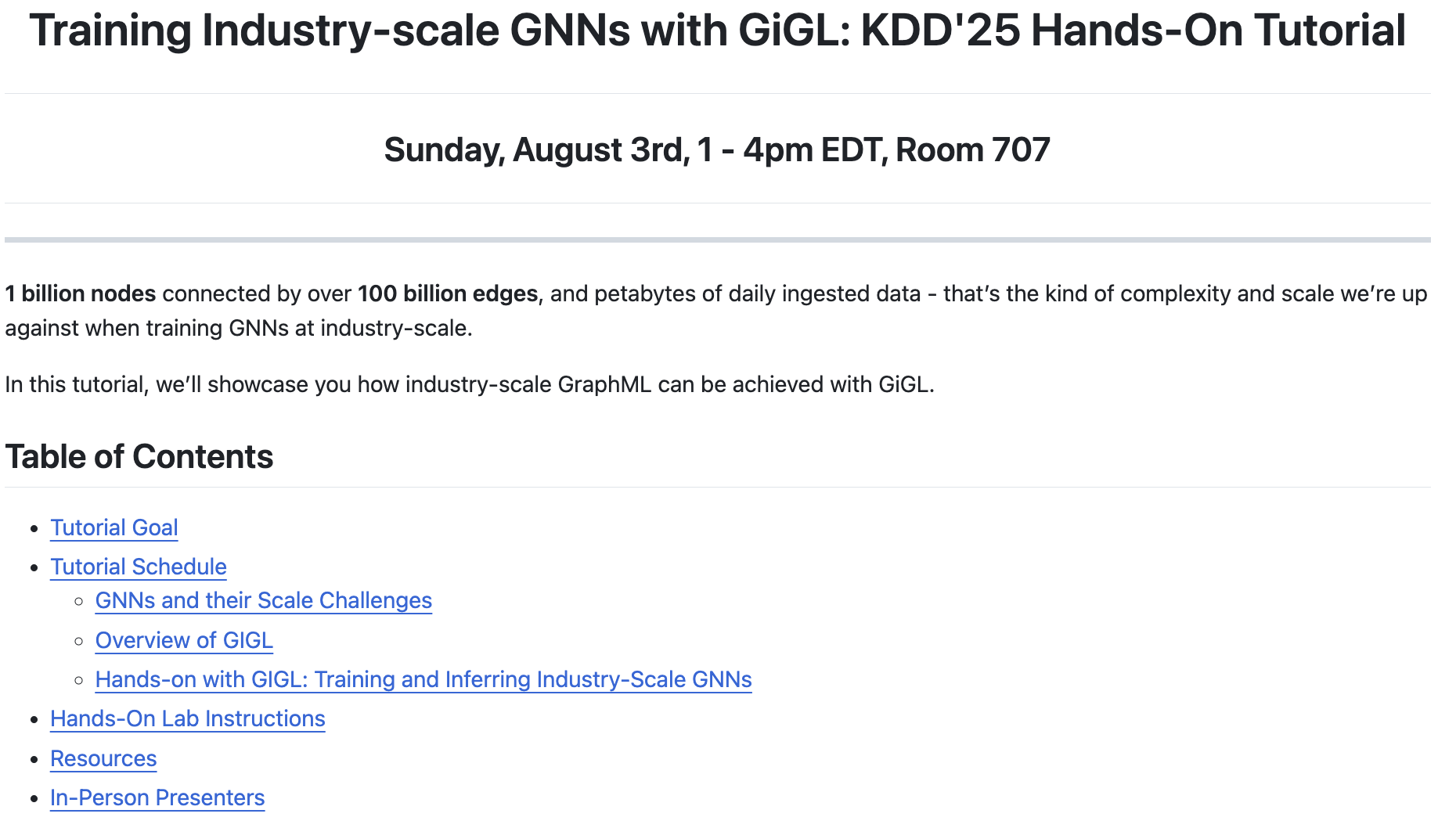

Training Industry-Scale Graph Neural Networks with GiGLYozen Liu, Tong Zhao, Matthew Kolodner, Kyle Montemayor, Shubham Vij, and Neil ShahIn ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2025

Training Industry-Scale Graph Neural Networks with GiGLYozen Liu, Tong Zhao, Matthew Kolodner, Kyle Montemayor, Shubham Vij, and Neil ShahIn ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2025Recent advances in graph machine learning (GML) and Graph Neu- ral Networks (GNNs) have sparked significant practical interest given the ability to model complex relationships between entities. Despite rapid progress in GNN designs, scalability remains a major challenge. Industry applications require solutions that can handle graphs with billions of nodes and edges efficiently. GiGL (Gigantic Graph Learning) is an open-source library from Snapchat, designed for large-scale distributed training and inference with GNNs. It seamlessly integrates with popular open-source GNN libraries like PyTorch Geometric (PyG). GiGL provides simplified configurable interfaces with minimal modeling code requirements, providing in- dustrial practitioners a straightforward way to apply GNNs to large- scale applications and enabling academics to conduct large-scale experiments. At the same time, it enables complex modeling capabil- ities desirable for modeling iteration. In this hands-on tutorial, we will demonstrate how GiGL addresses the scalability challenge in GNNs and provide a step-by-step guide for attendees to complete end-to-end training and inference with GiGL on industry-scale graphs. By the end of our tutorial, participants will have hands-on experience in training GNNs on graphs with billions of nodes and edges - capabilities not easily achievable with open-source graph learning libraries like PyG alone. We anticipate strong interest and participation from both industrial practitioners working on GNN applications and academics conducting large-scale experiments.

- KDD

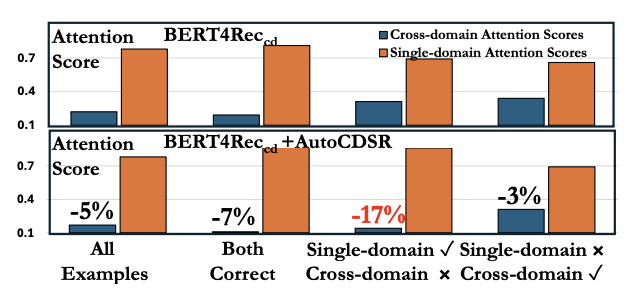

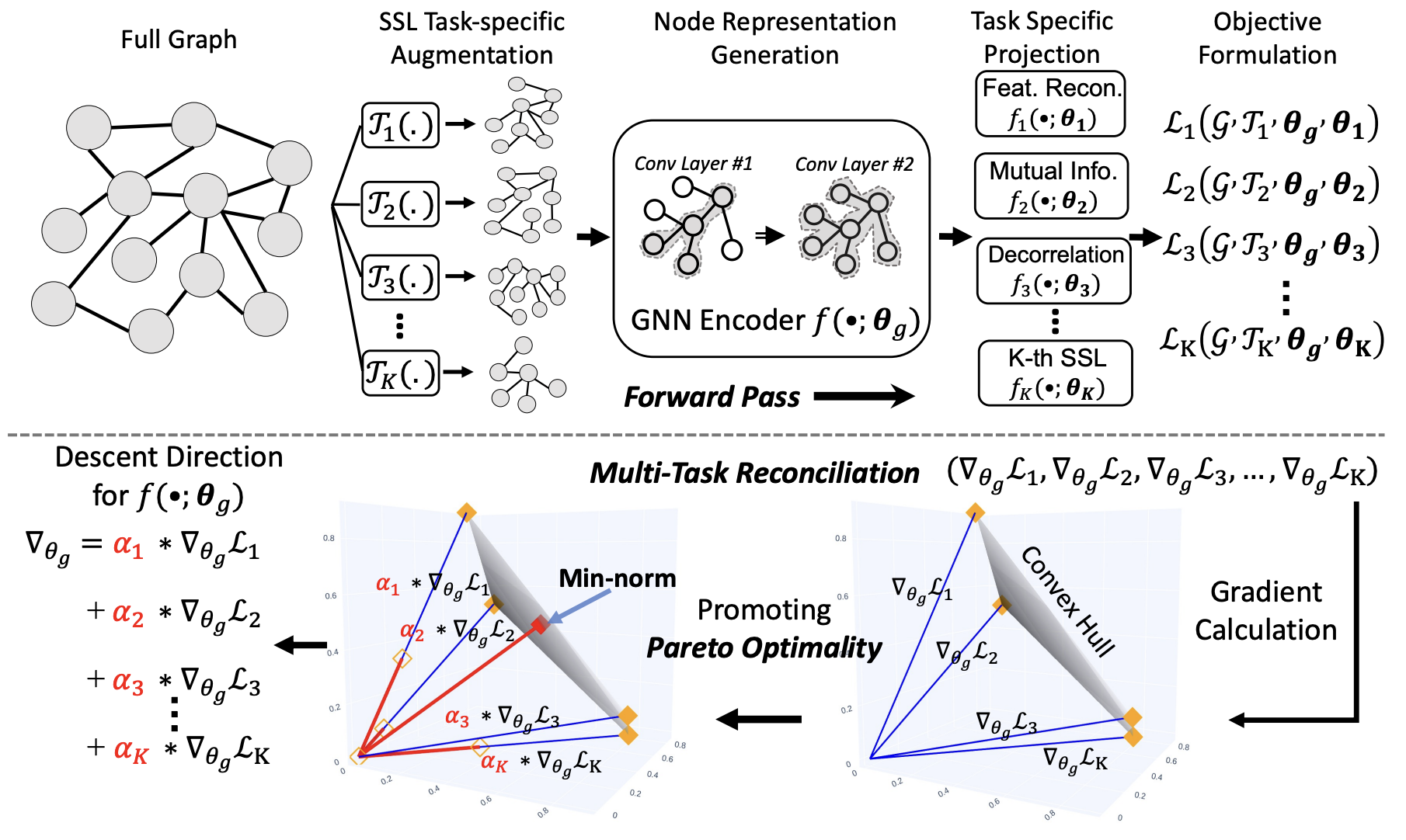

Revisiting Self-Attention for Cross-Domain Sequential RecommendationMingxuan Ju, Leonardo Neves, Bhuvesh Kumar, Liam Collins, Tong Zhao, Yuwei Qiu, Qing Dou, Sohail Nizam, and 2 more authorsIn ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2025

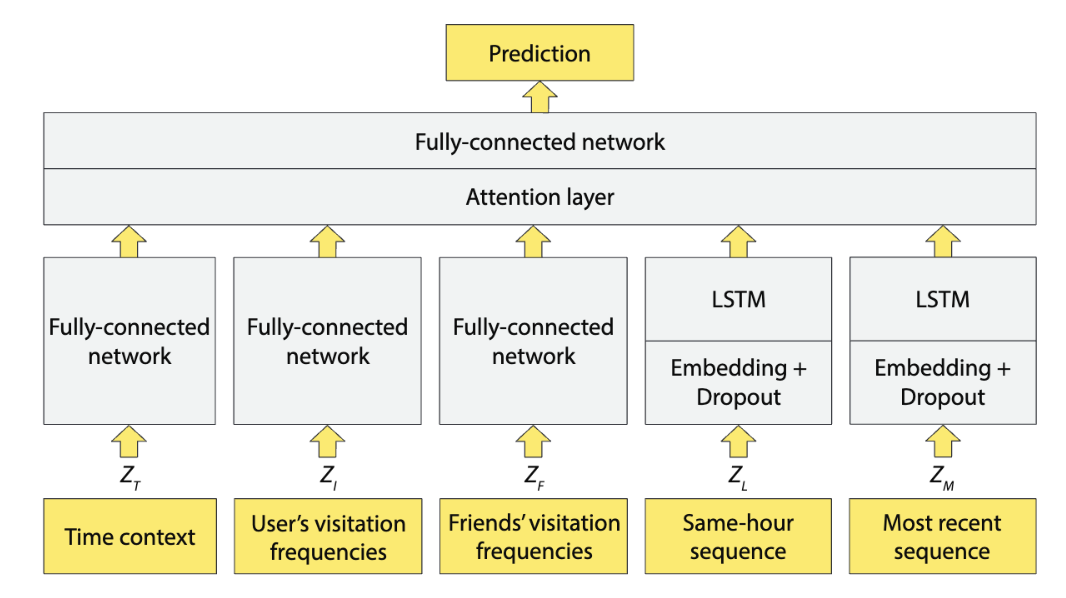

Revisiting Self-Attention for Cross-Domain Sequential RecommendationMingxuan Ju, Leonardo Neves, Bhuvesh Kumar, Liam Collins, Tong Zhao, Yuwei Qiu, Qing Dou, Sohail Nizam, and 2 more authorsIn ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2025Sequential recommendation is a popular paradigm in modern recommender systems. In particular, one challenging problem in this space is cross-domain sequential recommendation (CDSR), which aims to predict future behaviors given user interactions across multiple domains. Existing CDSR frameworks are mostly built on the self-attention transformer and seek to improve by explicitly injecting additional domain-specific components (e.g. domain-aware module blocks). While these additional components help, we argue they overlook the core self-attention module already present in the transformer, a naturally powerful tool to learn correlations among behaviors. In this work, we aim to improve the CDSR performance for simple models from a novel perspective of enhancing the self-attention. Specifically, we introduce a Pareto-optimal self-attention and formulate the cross-domain learning as a multi-objective problem, where we optimize the recommendation task while dynamically minimizing the cross-domain attention scores. Our approach automates knowledge transfer in CDSR (dubbed as AutoCDSR) – it not only mitigates negative transfer but also encourages complementary knowledge exchange among auxiliary domains. Based on the idea, we further introduce AutoCDSR+, a more performant variant with slight additional cost. Our proposal is easy to implement and works as a plug-and-play module that can be incorporated into existing transformer-based recommenders. Besides flexibility, it is practical to deploy because it brings little extra computational overheads without heavy hyper-parameter tuning. AutoCDSR on average improves Recall@10 for SASRec and Bert4Rec by 9.8% and 16.0% and NDCG@10 by 12.0% and 16.7%, respectively. Code is available at https://github.com/snap-research/AutoCDSR.

- preprint

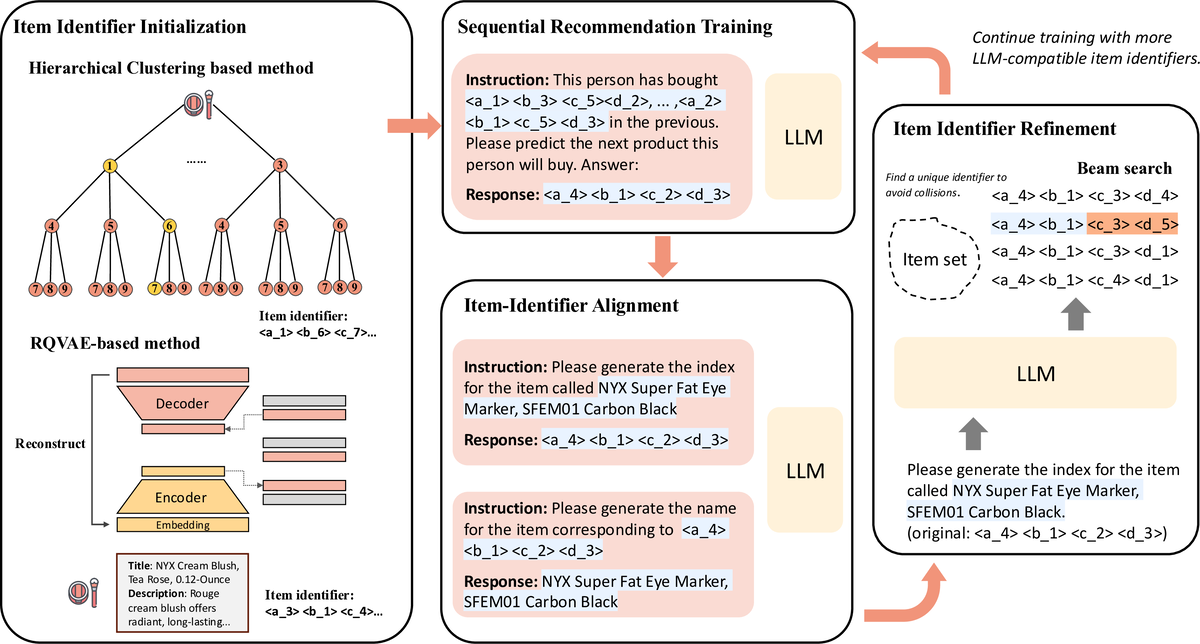

Enhancing Item Tokenization for Generative Recommendation through Self-ImprovementRunjin Chen, Mingxuan Ju, Ngoc Bui, Dimosthenis Antypas, Stanley Cai, Xiaopeng Wu, Leonardo Neves, Zhangyang Wang, and 2 more authorsarXiv preprint, 2025

Enhancing Item Tokenization for Generative Recommendation through Self-ImprovementRunjin Chen, Mingxuan Ju, Ngoc Bui, Dimosthenis Antypas, Stanley Cai, Xiaopeng Wu, Leonardo Neves, Zhangyang Wang, and 2 more authorsarXiv preprint, 2025Generative recommendation systems, driven by large language models (LLMs), present an innovative approach to predicting user preferences by modeling items as token sequences and generating recommendations in a generative manner. A critical challenge in this approach is the effective tokenization of items, ensuring that they are represented in a form compatible with LLMs. Current item tokenization methods include using text descriptions, numerical strings, or sequences of discrete tokens. While text-based representations integrate seamlessly with LLM tokenization, they are often too lengthy, leading to inefficiencies and complicating accurate generation. Numerical strings, while concise, lack semantic depth and fail to capture meaningful item relationships. Tokenizing items as sequences of newly defined tokens has gained traction, but it often requires external models or algorithms for token assignment. These external processes may not align with the LLM’s internal pretrained tokenization schema, leading to inconsistencies and reduced model performance. To address these limitations, we propose a self-improving item tokenization method that allows the LLM to refine its own item tokenizations during training process. Our approach starts with item tokenizations generated by any external model and periodically adjusts these tokenizations based on the LLM’s learned patterns. Such alignment process ensures consistency between the tokenization and the LLM’s internal understanding of the items, leading to more accurate recommendations. Furthermore, our method is simple to implement and can be integrated as a plug-and-play enhancement into existing generative recommendation systems. Experimental results on multiple datasets and using various initial tokenization strategies demonstrate the effectiveness of our method, with an average improvement of 8% in recommendation performance.

- SIRIP

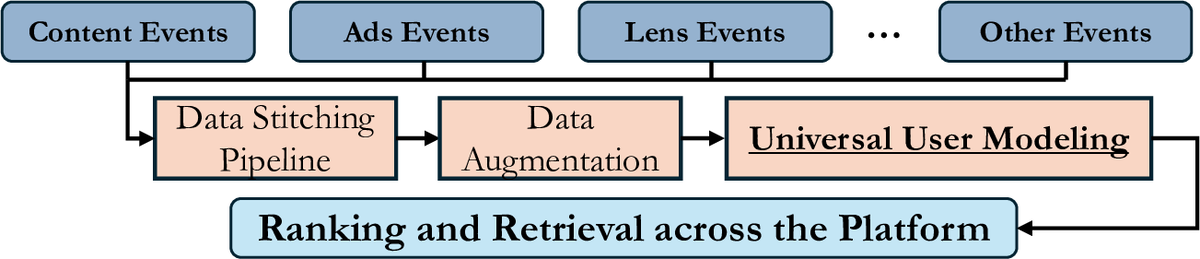

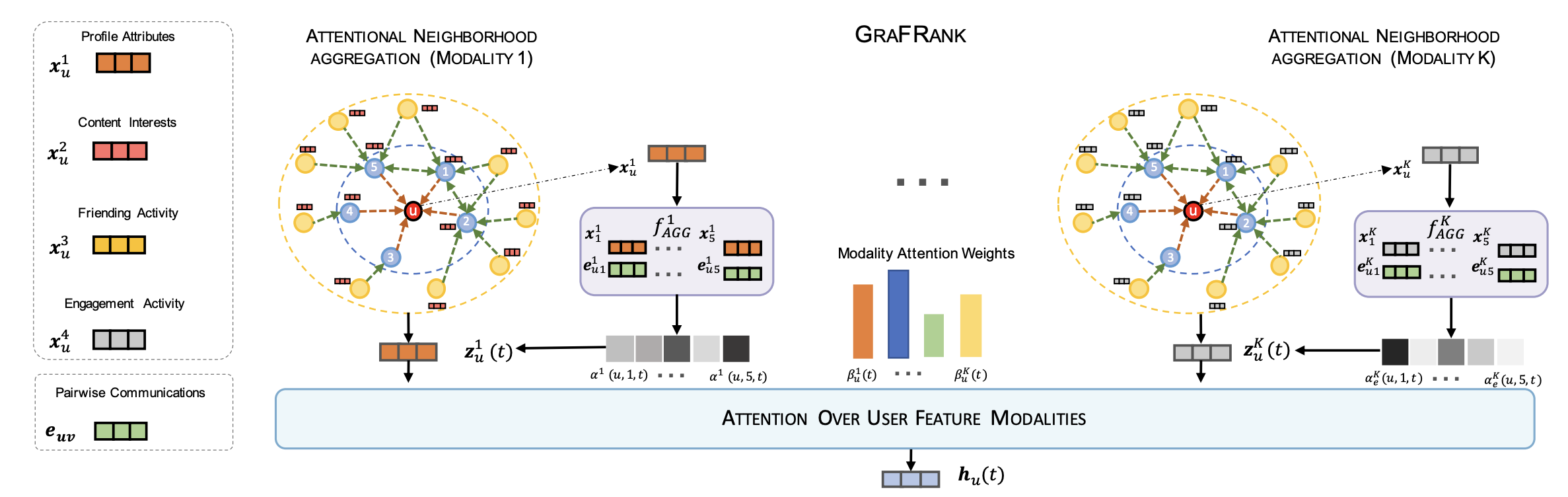

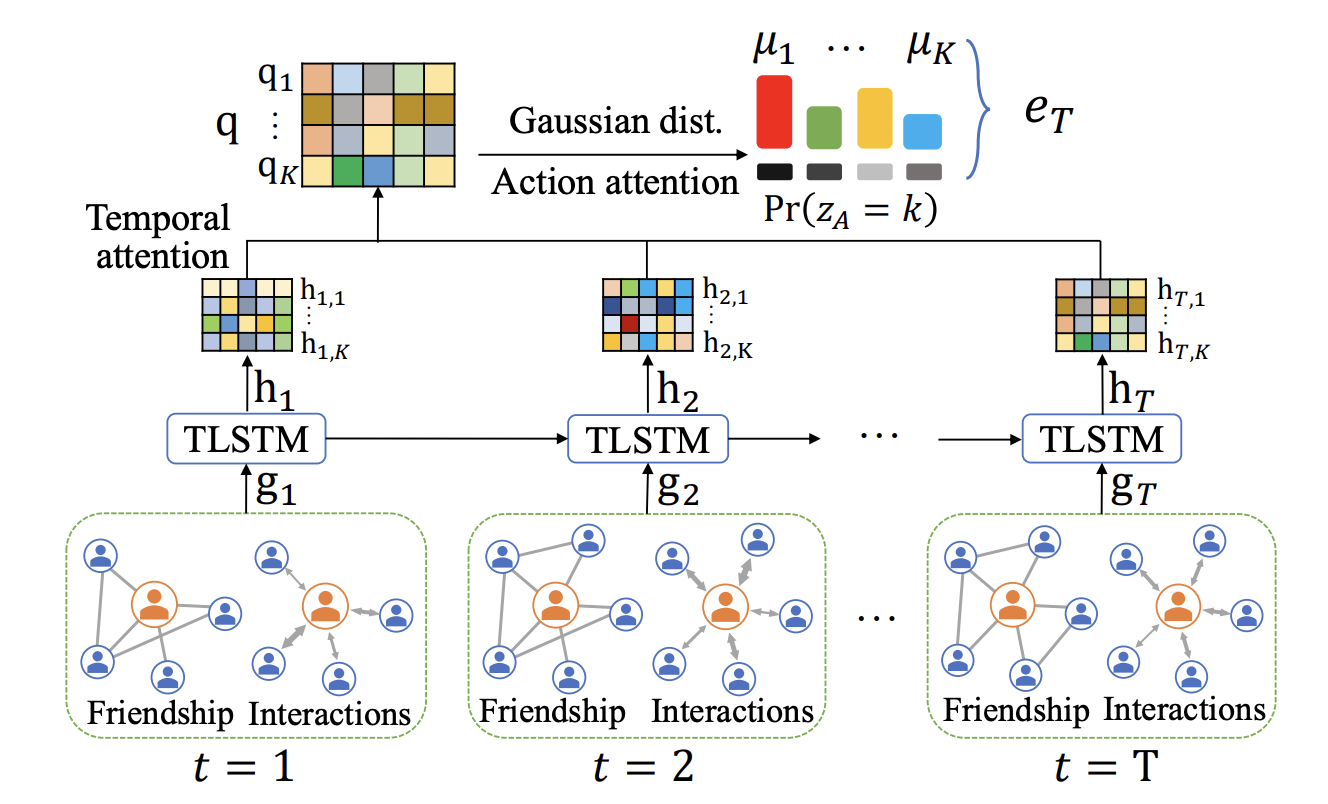

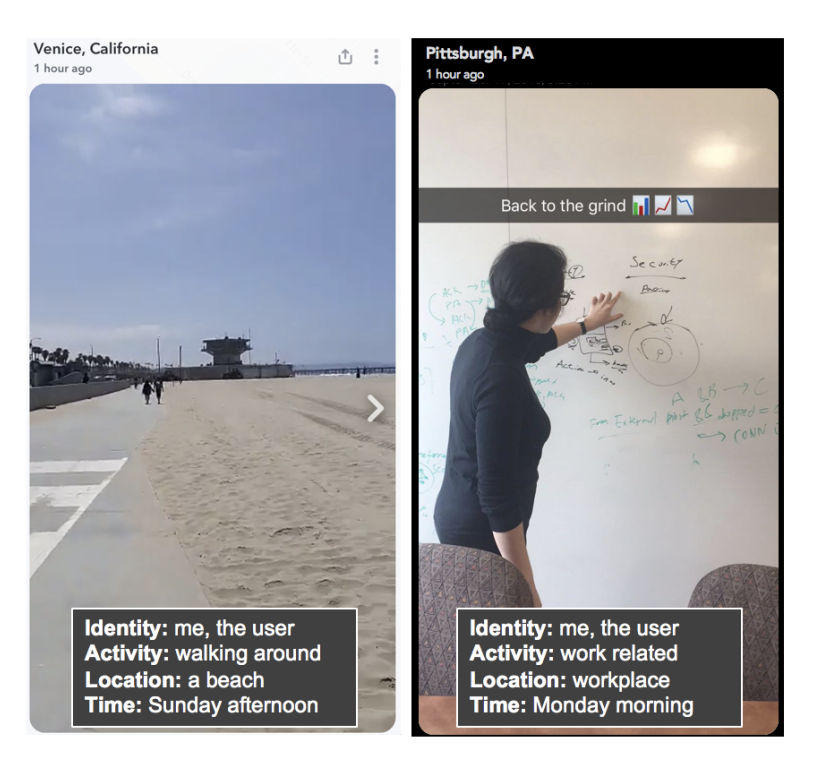

Learning Universal User Representations Leveraging Cross-domain User Intent at SnapchatMingxuan Ju, Leonardo Neves, Bhuvesh Kumar, Liam Collins, Tong Zhao, Yuwei Qiu, Ching Dou, Yang Zhou, and 6 more authorsIn ACM SIGIR Conference on Research and Development in Information Retrieval, 2025

Learning Universal User Representations Leveraging Cross-domain User Intent at SnapchatMingxuan Ju, Leonardo Neves, Bhuvesh Kumar, Liam Collins, Tong Zhao, Yuwei Qiu, Ching Dou, Yang Zhou, and 6 more authorsIn ACM SIGIR Conference on Research and Development in Information Retrieval, 2025The development of powerful user representations is a key factor in the success of recommender systems (RecSys). Online platforms employ a range of RecSys techniques to personalize user experience across diverse in-app surfaces. User representations are often learned individually through user’s historical interactions within each surface and user representations across different surfaces can be shared post-hoc as auxiliary features or additional retrieval sources. While effective, such schemes cannot directly encode collaborative filtering signals across different surfaces, hindering its capacity to discover complex relationships between user behaviors and preferences across the whole platform. To bridge this gap at Snapchat, we seek to conduct universal user modeling (UUM) across different in-app surfaces, learning general-purpose user representations which encode behaviors across surfaces. Instead of replacing domain-specific representations, UUM representations capture cross-domain trends, enriching existing representations with complementary information. This work discusses our efforts in developing initial UUM versions, practical challenges, technical choices and modeling and research directions with promising offline performance. Following successful A/B testing, UUM representations have been launched in production, powering multiple use cases and demonstrating their value. UUM embedding has been incorporated into (i) Long-form Video embedding-based retrieval, leading to 2.78% increase in Long-form Video Open Rate, (ii) Long-form Video L2 ranking, with 19.2% increase in Long-form Video View Time sum, (iii) Lens L2 ranking, leading to 1.76% increase in Lens play time, and (iv) Notification L2 ranking, with 0.87% increase in Notification Open Rate.

- ICML

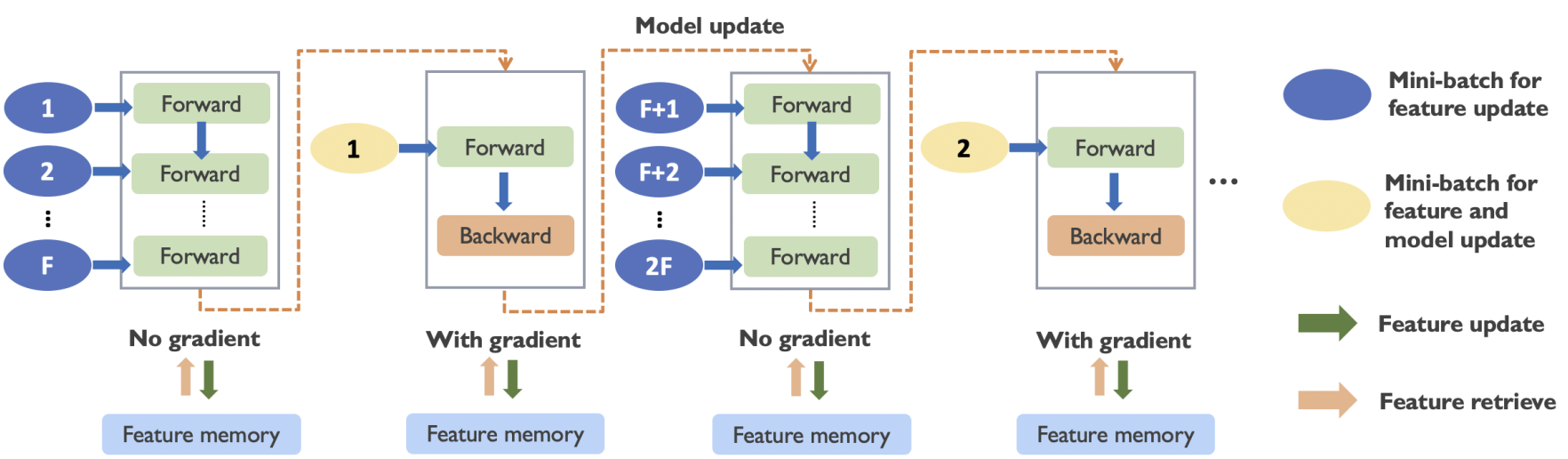

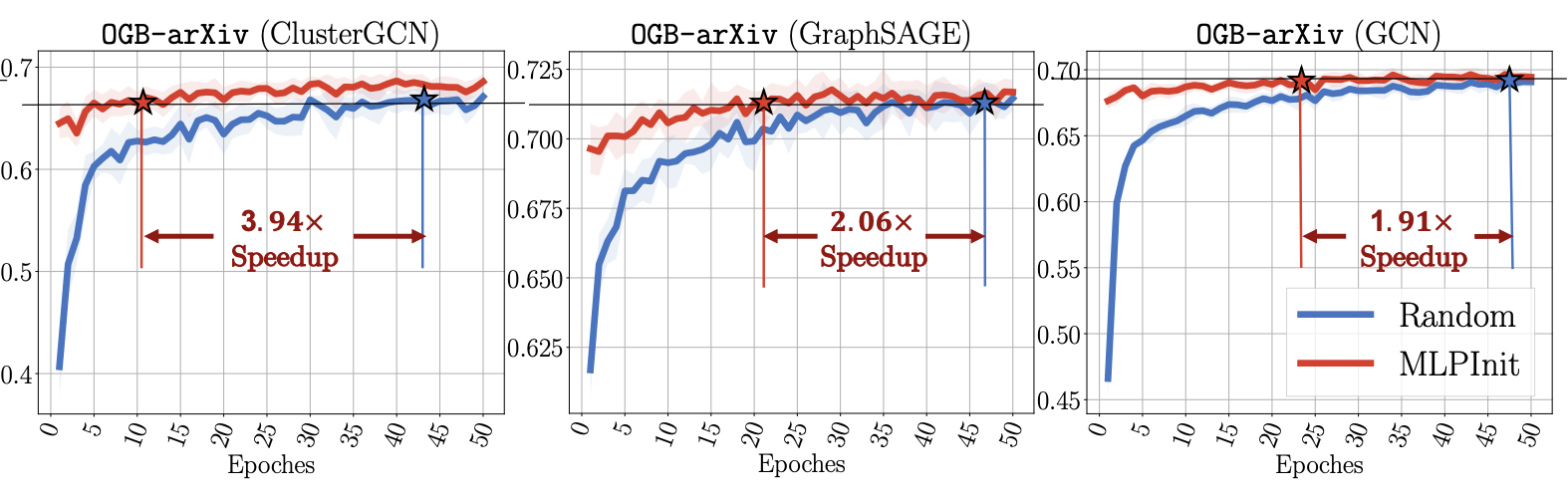

Haste Makes Waste: A Simple Approach for Scaling Graph Neural NetworksRui Xue, Tong Zhao, Neil Shah, and Xiaorui LiuIn International Conference on Machine Learning, 2025

Haste Makes Waste: A Simple Approach for Scaling Graph Neural NetworksRui Xue, Tong Zhao, Neil Shah, and Xiaorui LiuIn International Conference on Machine Learning, 2025Graph neural networks (GNNs) have demonstrated remarkable success in graph representation learning, and various sampling approaches have been proposed to scale GNNs to applications with large-scale graphs. A class of promising GNN training algorithms take advantage of historical embeddings to reduce the computation and memory cost while maintaining the model expressiveness of GNNs. However, they incur significant computation bias due to the stale feature history. In this paper, we provide a comprehensive analysis of their staleness and inferior performance on large-scale problems. Motivated by our discoveries, we propose a simple yet highly effective training algorithm (REST) to effectively reduce feature staleness, which leads to significantly improved performance and convergence across varying batch sizes. The proposed algorithm seamlessly integrates with existing solutions, boasting easy implementation, while comprehensive experiments underscore its superior performance and efficiency on large-scale benchmarks. Specifically, our improvements to state-of-the-art historical embedding methods result in a 2.7% and 3.6% performance enhancement on the ogbn-papers100M and ogbn-products dataset respectively, accompanied by notably accelerated convergence.

- ICML

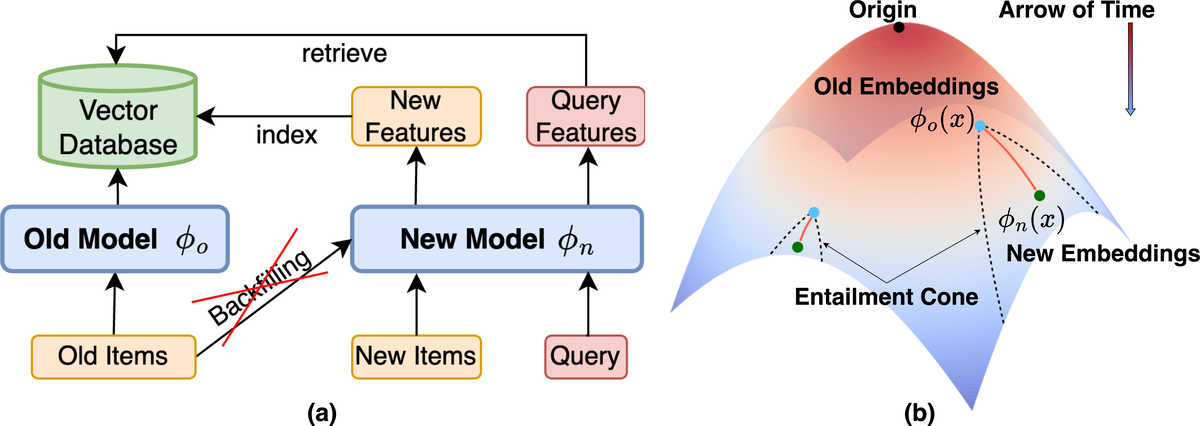

Hyperbolic Geometry for Backward-Compatible Representation LearningNgoc Bui, Menglin Yang, Runjin Chen, Leonardo Neves, Clark Ju, Rex Ying, Neil Shah, and Tong ZhaoIn International Conference on Machine Learning, 2025

Hyperbolic Geometry for Backward-Compatible Representation LearningNgoc Bui, Menglin Yang, Runjin Chen, Leonardo Neves, Clark Ju, Rex Ying, Neil Shah, and Tong ZhaoIn International Conference on Machine Learning, 2025Backward compatible representation learning enables updated models to integrate seamlessly with existing ones, avoiding to reprocess stored data. Despite recent advances, existing compatibility approaches in Euclidean space neglect the uncertainty in the old embedding model and force the new model to reconstruct outdated representations regardless of their quality, thereby hindering the learning process of the new model. In this paper, we propose to switch perspectives to hyperbolic geometry, where we treat time as a natural axis for capturing a model’s confidence and evolution. By lifting embeddings into hyperbolic space and constraining updated embeddings to lie within the entailment cone of the old ones, we maintain generational consistency across models while accounting for uncertainties in the representations. To further enhance compatibility, we introduce a robust contrastive alignment loss that dynamically adjusts alignment weights based on the uncertainty of the old embeddings. Experiments validate the superiority of the proposed method in achieving compatibility, paving the way for more resilient and adaptable machine learning systems.

- TheWebConf

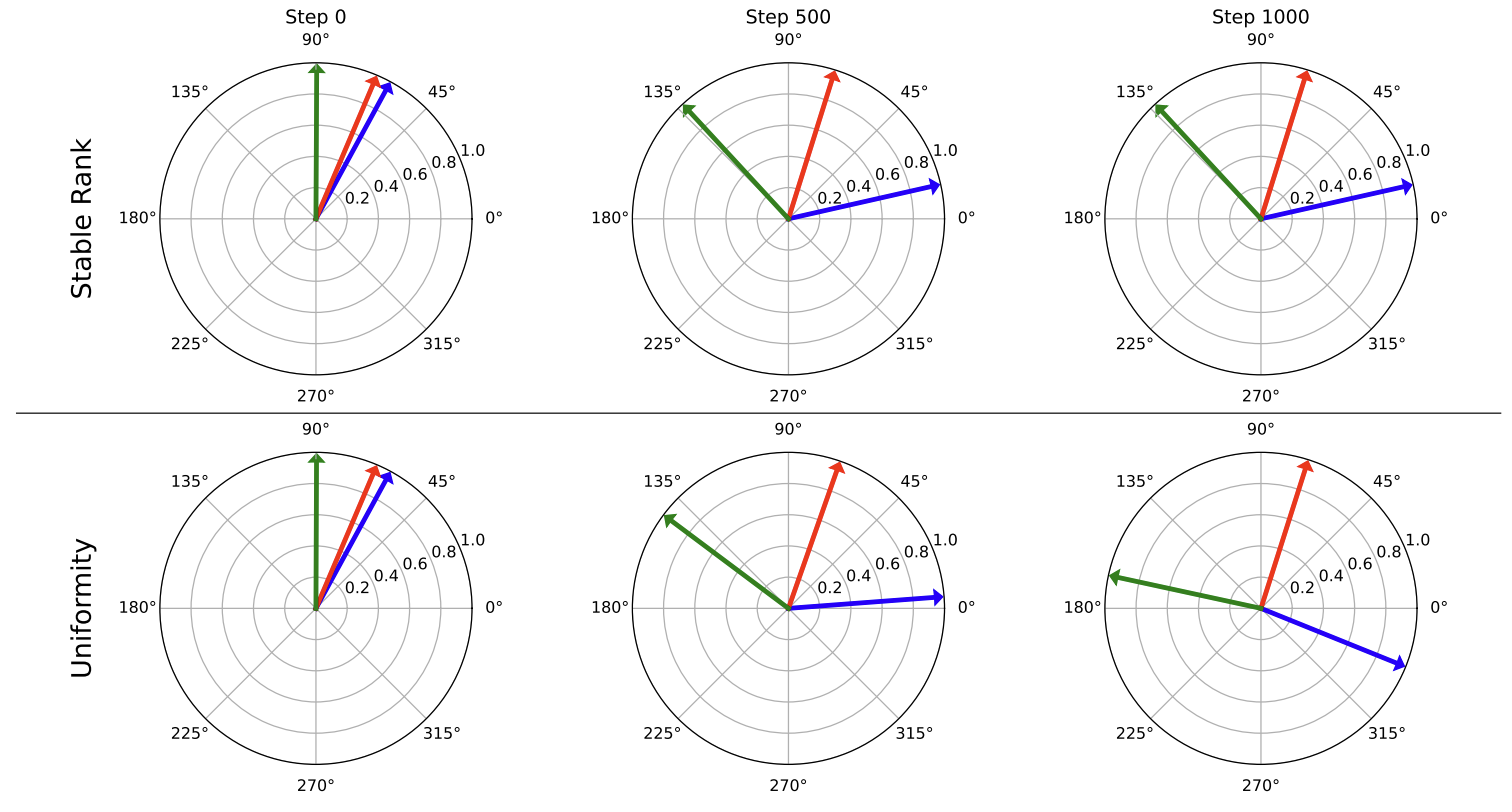

Understanding and Scaling Collaborative Filtering Optimization from the Perspective of Matrix RankDonald Loveland, Xinyi Wu, Tong Zhao, Danai Koutra, Neil Shah, and Mingxuan JuIn The Web Conference, 2025

Understanding and Scaling Collaborative Filtering Optimization from the Perspective of Matrix RankDonald Loveland, Xinyi Wu, Tong Zhao, Danai Koutra, Neil Shah, and Mingxuan JuIn The Web Conference, 2025Collaborative Filtering (CF) methods dominate real-world recommender systems given their ability to learn high-quality, sparse ID-embedding tables that effectively capture user preferences. These tables scale linearly with the number of users and items, and are trained to ensure high similarity between embeddings of interacted user-item pairs, while maintaining low similarity for non-interacted pairs. Despite their high performance, encouraging dispersion for non-interacted pairs necessitates expensive regularization (e.g., negative sampling), hurting runtime and scalability. Existing research tends to address these challenges by simplifying the learning process, either by reducing model complexity or sampling data, trading performance for runtime. In this work, we move beyond model-level modifications and study the properties of the embedding tables under different learning strategies. Through theoretical analysis, we find that the singular values of the embedding tables are intrinsically linked to different CF loss functions. These findings are empirically validated on real-world datasets, demonstrating the practical benefits of higher stable rank, a continuous version of matrix rank which encodes the distribution of singular values. Based on these insights, we propose an efficient warm-start strategy that regularizes the stable rank of the user and item embeddings. We show that stable rank regularization during early training phases can promote higher-quality embeddings, resulting in training speed improvements of up to 66%. Additionally, stable rank regularization can act as a proxy for negative sampling, allowing for performance gains of up to 21% over loss functions with small negative sampling ratios. Overall, our analysis unifies current CF methods under a new perspective, their optimization of stable rank, motivating a flexible regularization method.

- TheWebConf

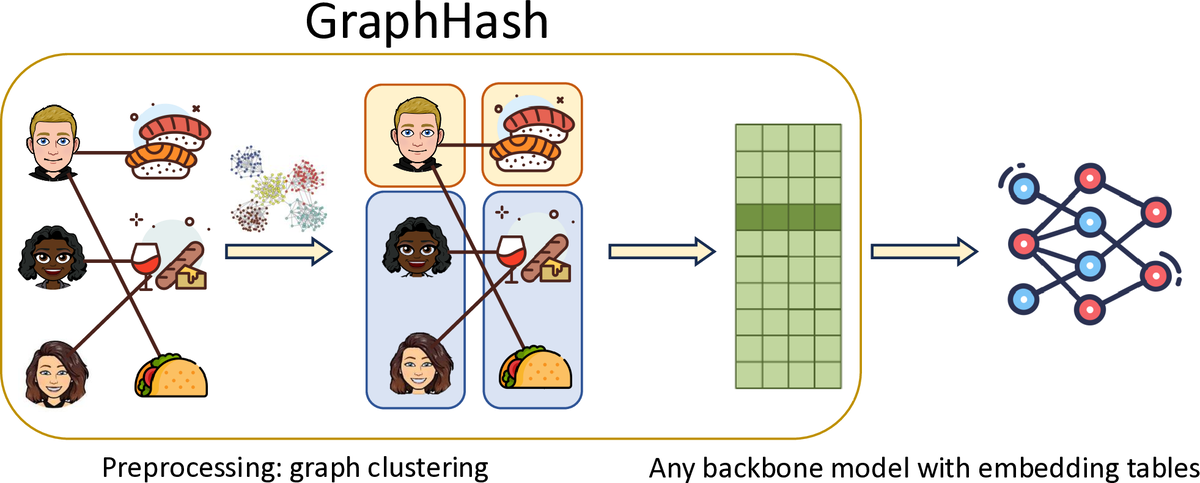

GraphHash: Graph Clustering Enables Parameter Efficiency in Recommender SystemsXinyi Wu, Donald Loveland, Runjin Chen, Yozen Liu, Xin Chen, Leonardo Neves, Ali Jadbabaie, Mingxuan Ju, and 2 more authorsIn The Web Conference, 2025

GraphHash: Graph Clustering Enables Parameter Efficiency in Recommender SystemsXinyi Wu, Donald Loveland, Runjin Chen, Yozen Liu, Xin Chen, Leonardo Neves, Ali Jadbabaie, Mingxuan Ju, and 2 more authorsIn The Web Conference, 2025Deep recommender systems rely heavily on large embedding tables to handle high-cardinality categorical features such as user/item identifiers, and face significant memory constraints at scale. To tackle this challenge, hashing techniques are often employed to map multiple entities to the same embedding and thus reduce the size of the embedding tables. Concurrently, graph-based collaborative signals have emerged as powerful tools in recommender systems, yet their potential for optimizing embedding table reduction remains unexplored. This paper introduces GraphHash, the first graph-based approach that leverages modularity-based bipartite graph clustering on user-item interaction graphs to reduce embedding table sizes. We demonstrate that the modularity objective has a theoretical connection to message-passing, which provides a foundation for our method. By employing fast clustering algorithms, GraphHash serves as a computationally efficient proxy for message-passing during preprocessing and a plug-and-play graph-based alternative to traditional ID hashing. Extensive experiments show that GraphHash substantially outperforms diverse hashing baselines on both retrieval and click-through-rate prediction tasks. In particular, GraphHash achieves on average a 101.52% improvement in recall when reducing the embedding table size by more than 75%, highlighting the value of graph-based collaborative information for model reduction. Our code is available at https://github.com/snap-research/GraphHash.

- CVPR

Mosaic of Modalities: A Comprehensive Benchmark for Multimodal Graph LearningJing Zhu, Yuhang Zhou, Shengyi Qian, Zhongmou He, Tong Zhao, Neil Shah, and Danai KoutraIn Conference on Computer Vision and Pattern Recognition, 2025

Mosaic of Modalities: A Comprehensive Benchmark for Multimodal Graph LearningJing Zhu, Yuhang Zhou, Shengyi Qian, Zhongmou He, Tong Zhao, Neil Shah, and Danai KoutraIn Conference on Computer Vision and Pattern Recognition, 2025Graph machine learning has made significant strides in recent years, yet the integration of visual information with graph structure and its potential for improving performance in downstream tasks remains an underexplored area. To address this critical gap, we introduce the Multimodal Graph Benchmark (MM-GRAPH), a pioneering benchmark that incorporates both visual and textual information into graph learning tasks. MM-GRAPH extends beyond existing text-attributed graph benchmarks, offering a more comprehensive evaluation framework for multimodal graph learning Our benchmark comprises seven diverse datasets of varying scales (ranging from thousands to millions of edges), designed to assess algorithms across different tasks in real-world scenarios. These datasets feature rich multimodal node attributes, including visual data, which enables a more holistic evaluation of various graph learning frameworks in complex, multimodal environments. To support advancements in this emerging field, we provide an extensive empirical study on various graph learning frameworks when presented with features from multiple modalities, particularly emphasizing the impact of visual information. This study offers valuable insights into the challenges and opportunities of integrating visual data into graph learning.

- preprint

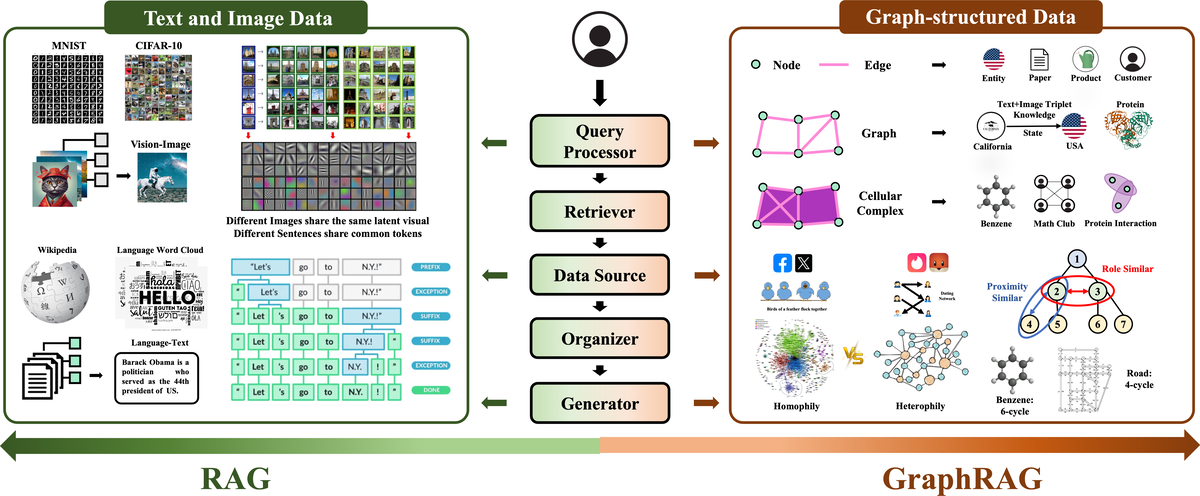

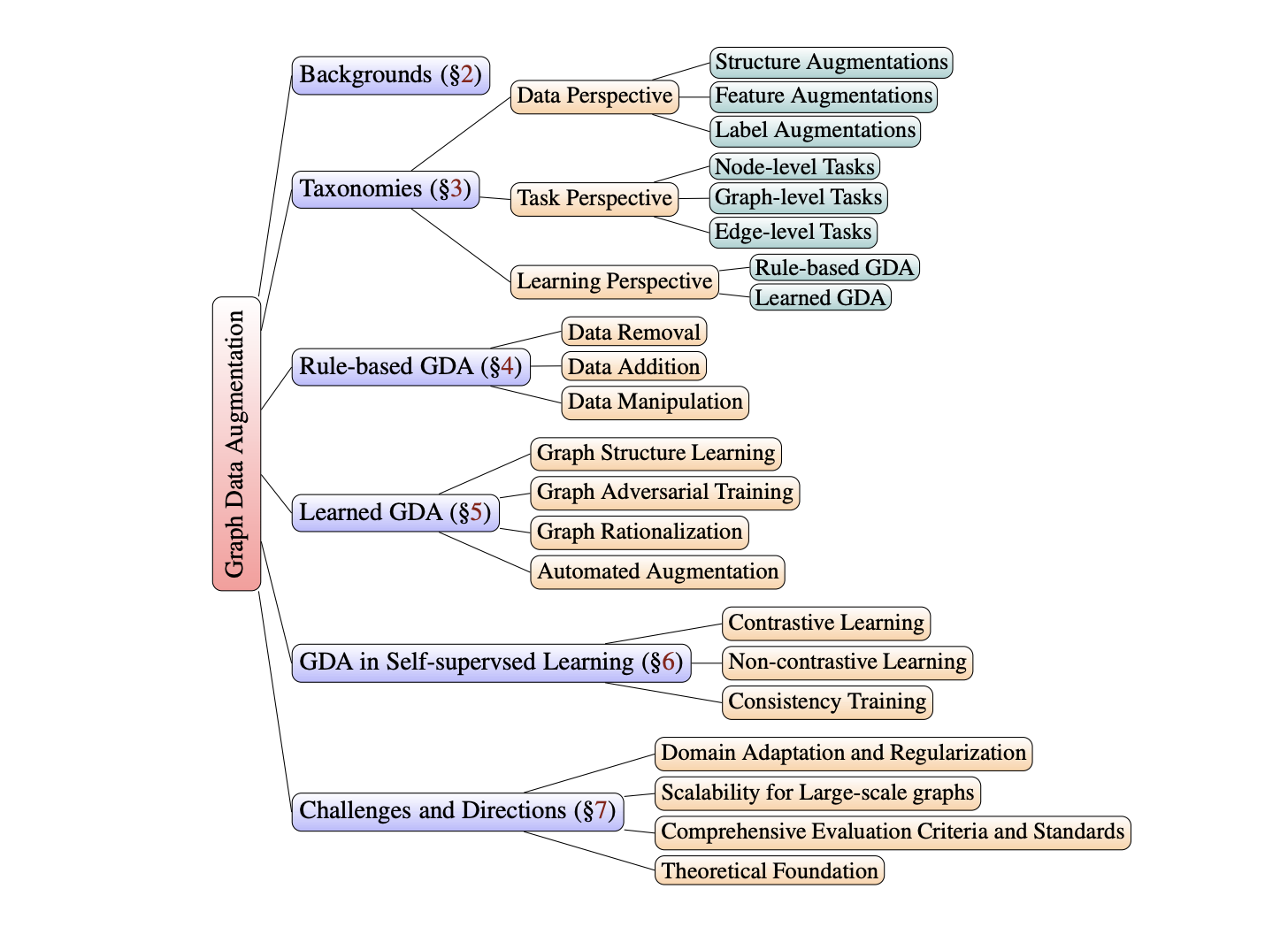

Retrieval-Augmented Generation with Graphs (GraphRAG)Haoyu Han, Yu Wang, Harry Shomer, Kai Guo, Jiayuan Ding, Yongjia Lei, Mahantesh Halappanavar, Ryan A. Rossi, and 10 more authorsarXiv preprint, 2025

Retrieval-Augmented Generation with Graphs (GraphRAG)Haoyu Han, Yu Wang, Harry Shomer, Kai Guo, Jiayuan Ding, Yongjia Lei, Mahantesh Halappanavar, Ryan A. Rossi, and 10 more authorsarXiv preprint, 2025Retrieval-augmented generation (RAG) is a powerful technique that enhances downstream task execution by retrieving additional information, such as knowledge, skills, and tools from external sources. Graph, by its intrinsic "nodes connected by edges" nature, encodes massive heterogeneous and relational information, making it a golden resource for RAG in tremendous real-world applications. As a result, we have recently witnessed increasing attention on equipping RAG with Graph, i.e., GraphRAG. However, unlike conventional RAG, where the retriever, generator, and external data sources can be uniformly designed in the neural-embedding space, the uniqueness of graph-structured data, such as diverse-formatted and domain-specific relational knowledge, poses unique and significant challenges when designing GraphRAG for different domains. Given the broad applicability, the associated design challenges, and the recent surge in GraphRAG, a systematic and up-to-date survey of its key concepts and techniques is urgently desired. Following this motivation, we present a comprehensive and up-to-date survey on GraphRAG. Our survey first proposes a holistic GraphRAG framework by defining its key components, including query processor, retriever, organizer, generator, and data source. Furthermore, recognizing that graphs in different domains exhibit distinct relational patterns and require dedicated designs, we review GraphRAG techniques uniquely tailored to each domain. Finally, we discuss research challenges and brainstorm directions to inspire cross-disciplinary opportunities. Our survey repository is publicly maintained at https://github.com/Graph-RAG/GraphRAG/.

- TheWebConf

Improving Out-of-Vocabulary Handling in Recommendation SystemsWilliam Shiao, Mingxuan Ju, Zhichun Guo, Xin Chen, Evangelos Papalexakis, Tong Zhao, Neil Shah, and Yozen LiuIn Resource Efficient Learning Workshop at The Web Conference, 2025

Improving Out-of-Vocabulary Handling in Recommendation SystemsWilliam Shiao, Mingxuan Ju, Zhichun Guo, Xin Chen, Evangelos Papalexakis, Tong Zhao, Neil Shah, and Yozen LiuIn Resource Efficient Learning Workshop at The Web Conference, 2025Recommendation systems (RS) are an increasingly relevant area for both academic and industry researchers, given their widespread impact on the daily online experiences of billions of users. One common issue in real RS is the cold-start problem, where users and items may not contain enough information to produce high-quality recommendations. This work focuses on a complementary problem: recommending new users and items unseen (out-of-vocabulary, or OOV) at training time. This setting is known as the inductive setting and is especially problematic for factorization-based models, which rely on encoding only those users/items seen at training time with fixed parameter vectors. Many existing solutions applied in practice are often naive, such as assigning OOV users/items to random buckets. In this work, we tackle this problem and propose approaches that better leverage available user/item features to improve OOV handling at the embedding table level. We discuss general-purpose plug-and-play approaches that are easily applicable to most RS models and improve inductive performance without negatively impacting transductive model performance. We extensively evaluate 9 OOV embedding methods on 5 models across 4 datasets (spanning different domains). One of these datasets is a proprietary production dataset from a prominent RS employed by a large social platform serving hundreds of millions of daily active users. In our experiments, we find that several proposed methods that exploit feature similarity using LSH consistently outperform alternatives on most model-dataset combinations, with the best method showing a mean improvement of 3.74% over the industry standard baseline in inductive performance. We release our code and hope our work helps practitioners make more informed decisions when handling OOV for their RS and further inspires academic research into improving OOV support in RS.

2024

- LoG

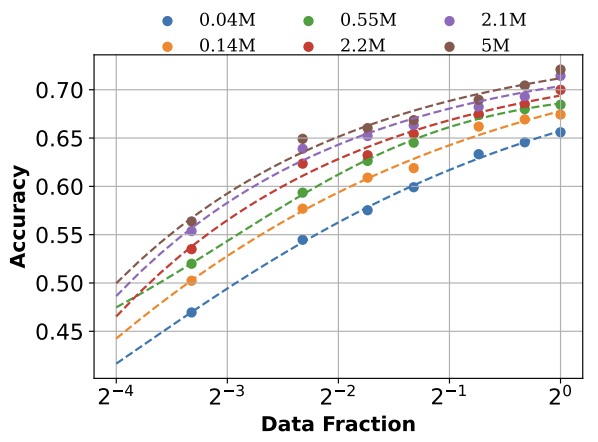

Towards Neural Scaling Laws on GraphsJingzhe Liu, Haitao Mao, Zhikai Chen, Tong Zhao, Neil Shah, and Jiliang TangIn Learning on Graphs Conference, 2024

Towards Neural Scaling Laws on GraphsJingzhe Liu, Haitao Mao, Zhikai Chen, Tong Zhao, Neil Shah, and Jiliang TangIn Learning on Graphs Conference, 2024Deep graph models (e.g., graph neural networks and graph transformers) have become important techniques for leveraging knowledge across various types of graphs. Yet, the neural scaling laws on graphs, i.e., how the performance of deep graph models changes with model and dataset sizes, have not been systematically investigated, casting doubts on the feasibility of achieving large graph models. To fill this gap, we benchmark many graph datasets from different tasks and make an attempt to establish the neural scaling laws on graphs from both model and data perspectives. The model size we investigated is up to 100 million parameters, and the dataset size investigated is up to 50 million samples. We first verify the validity of such laws on graphs, establishing proper formulations to describe the scaling behaviors. For model scaling, we identify that despite the parameter numbers, the model depth also plays an important role in affecting the model scaling behaviors, which differs from observations in other domains such as CV and NLP. For data scaling, we suggest that the number of graphs can not effectively measure the graph data volume in scaling law since the sizes of different graphs are highly irregular. Instead, we reform the data scaling law with the number of nodes or edges as the metric to address the irregular graph sizes. We further demonstrate that the reformed law offers a unified view of the data scaling behaviors for various fundamental graph tasks including node classification, link prediction, and graph classification. This work provides valuable insights into neural scaling laws on graphs, which can serve as an important tool for collecting new graph data and developing large graph models.

- NeurIPS

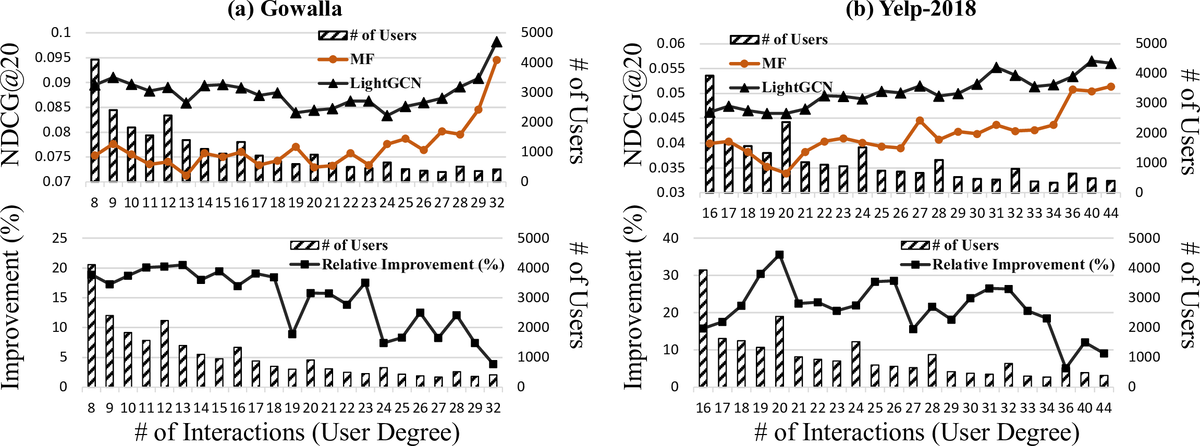

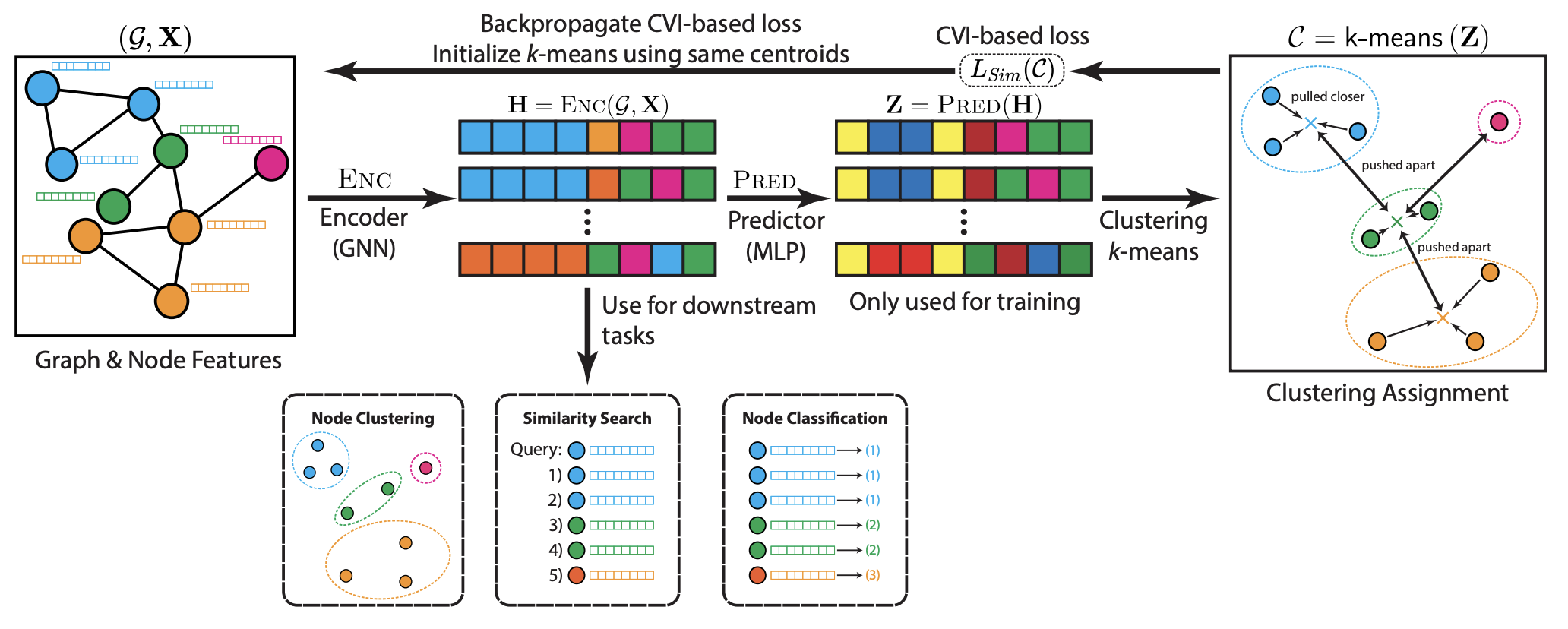

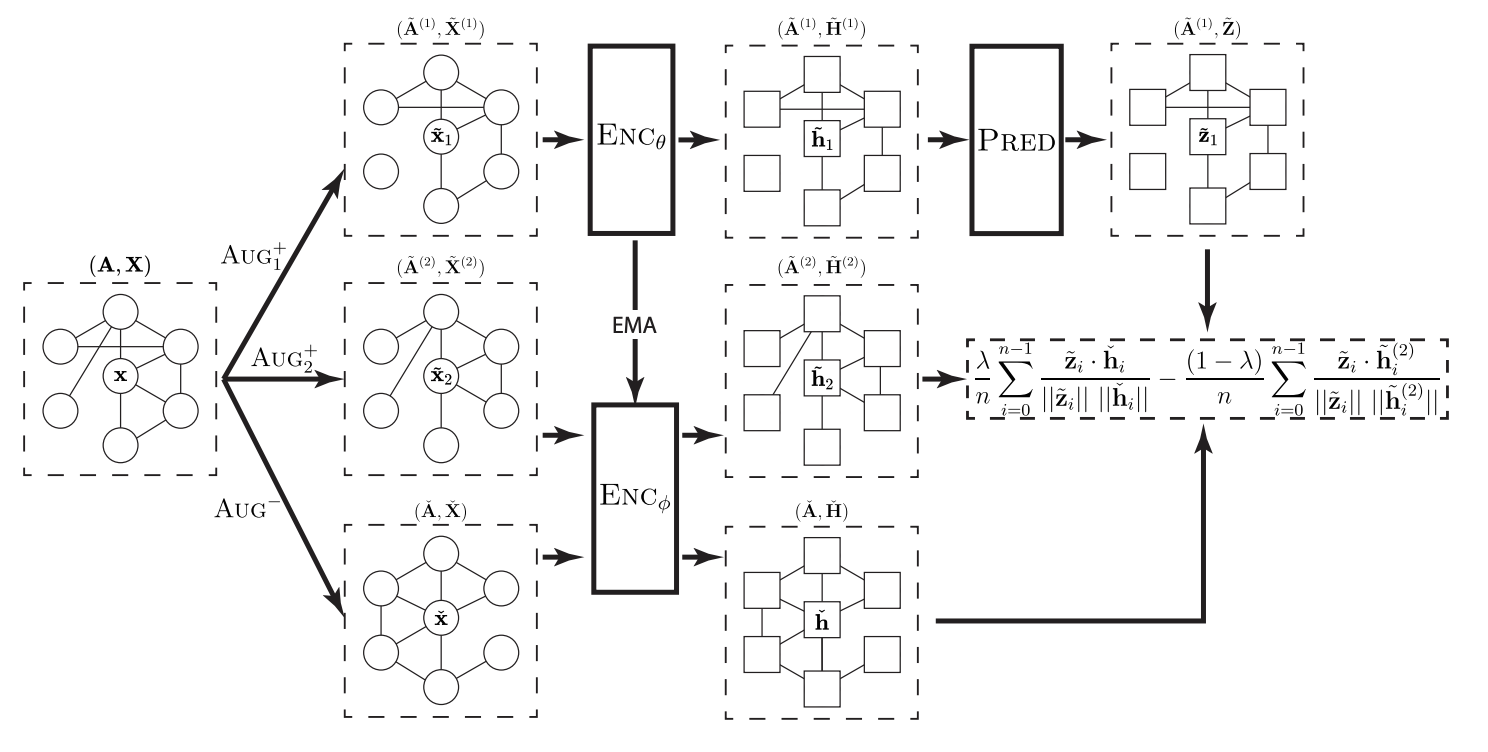

Test-time Aggregation for Collaborative FilteringMingxuan Ju, William Shiao, Zhichun Guo, Yanfang Ye, Yozen Liu, Neil Shah, and Tong ZhaoIn Conference on Neural Information Processing Systems, 2024

Test-time Aggregation for Collaborative FilteringMingxuan Ju, William Shiao, Zhichun Guo, Yanfang Ye, Yozen Liu, Neil Shah, and Tong ZhaoIn Conference on Neural Information Processing Systems, 2024Collaborative filtering (CF) has exhibited prominent results for recommender systems and been broadly utilized for real-world applications. A branch of research enhances CF methods by message passing used in graph neural networks, due to its strong capabilities of extracting knowledge from graph-structured data, like user-item bipartite graphs that naturally exist in CF. They assume that message passing helps CF methods in a manner akin to its benefits for graph-based learning tasks in general. However, even though message passing empirically improves CF, whether or not this assumption is correct still needs verification. To address this gap, we formally investigate why message passing helps CF from multiple perspectives and show that many assumptions made by previous works are not entirely accurate. With our curated ablation studies and theoretical analyses, we discover that (1) message passing improves the CF performance primarily by additional representations passed from neighbors during the forward pass instead of additional gradient updates to neighbor representations during the model back-propagation and (ii) message passing usually helps low-degree nodes more than high-degree nodes. Utilizing these novel findings, we present Test-time Aggregation for CF, namely TAG-CF, a test-time augmentation framework that only conducts message passing once at inference time. The key novelty of TAG-CF is that it effectively utilizes graph knowledge while circumventing most of notorious computational overheads of message passing. Besides, TAG-CF is extremely versatile can be used as a plug-and-play module to enhance representations trained by different CF supervision signals. Evaluated on six datasets, TAG-CF consistently improves the recommendation performance of CF methods without graph by up to 39.2% on cold users and 31.7% on all users, with little to no extra computational overheads.

- RecSys

Robust Training Objectives Improve Embedding-based Retrieval in Industrial Recommendation SystemsMatthew Kolodner, Mingxuan Ju, Zihao Fan, Tong Zhao, Elham Ghazizadeh, Yan Wu, Neil Shah, and Yozen LiuIn RobustRecSys Workshop @ ACM Conference on Recommender Systems, 2024

Robust Training Objectives Improve Embedding-based Retrieval in Industrial Recommendation SystemsMatthew Kolodner, Mingxuan Ju, Zihao Fan, Tong Zhao, Elham Ghazizadeh, Yan Wu, Neil Shah, and Yozen LiuIn RobustRecSys Workshop @ ACM Conference on Recommender Systems, 2024Graph Foundation Models (GFMs) are emerging as a significant research topic in the graph domain, aiming to develop graph models trained on extensive and diverse data to enhance their applicability across various tasks and domains. Developing GFMs presents unique challenges over traditional Graph Neural Networks (GNNs), which are typically trained from scratch for specific tasks on particular datasets. The primary challenge in constructing GFMs lies in effectively leveraging vast and diverse graph data to achieve positive transfer. Drawing inspiration from existing foundation models in the CV and NLP domains, we propose a novel perspective for the GFM development by advocating for a “graph vocabulary”, in which the basic transferable units underlying graphs encode the invariance on graphs. We ground the graph vocabulary construction from essential aspects including network analysis, expressiveness, and stability. Such a vocabulary perspective can potentially advance the future GFM design in line with the neural scaling laws. All relevant resources with GFM design can be found here.

- ICML

Graph Foundation ModelsHaitao Mao, Zhikai Chen, Wenzhuo Tang, Jianan Zhao, Yao Ma, Tong Zhao, Neil Shah, Mikhail Galkin, and 1 more authorIn International Conference on Machine Learning, 2024

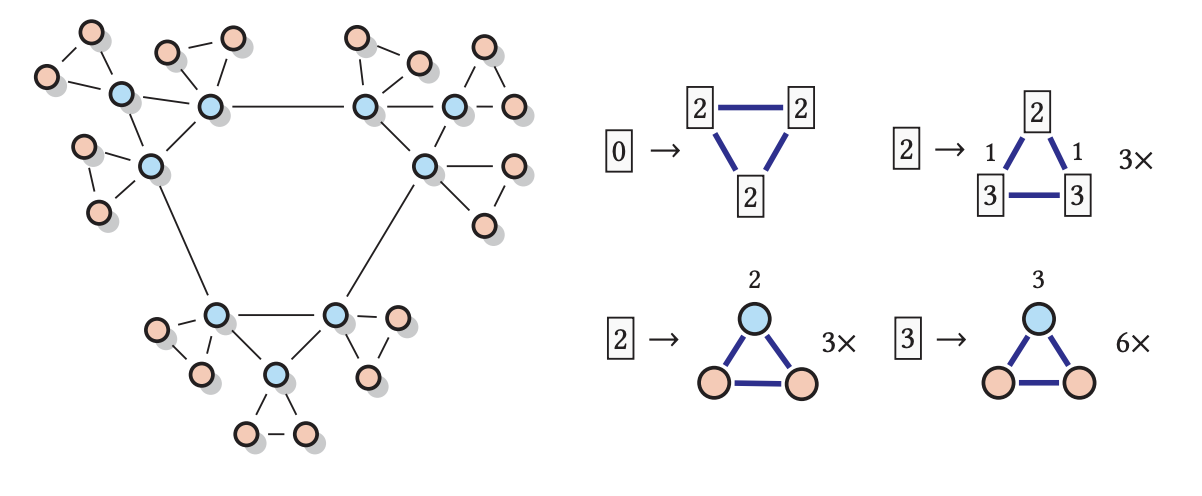

Graph Foundation ModelsHaitao Mao, Zhikai Chen, Wenzhuo Tang, Jianan Zhao, Yao Ma, Tong Zhao, Neil Shah, Mikhail Galkin, and 1 more authorIn International Conference on Machine Learning, 2024Graph Foundation Models (GFMs) are emerging as a significant research topic in the graph domain, aiming to develop graph models trained on extensive and diverse data to enhance their applicability across various tasks and domains. Developing GFMs presents unique challenges over traditional Graph Neural Networks (GNNs), which are typically trained from scratch for specific tasks on particular datasets. The primary challenge in constructing GFMs lies in effectively leveraging vast and diverse graph data to achieve positive transfer. Drawing inspiration from existing foundation models in the CV and NLP domains, we propose a novel perspective for the GFM development by advocating for a “graph vocabulary”, in which the basic transferable units underlying graphs encode the invariance on graphs. We ground the graph vocabulary construction from essential aspects including network analysis, expressiveness, and stability. Such a vocabulary perspective can potentially advance the future GFM design in line with the neural scaling laws. All relevant resources with GFM design can be found here.

- ICML

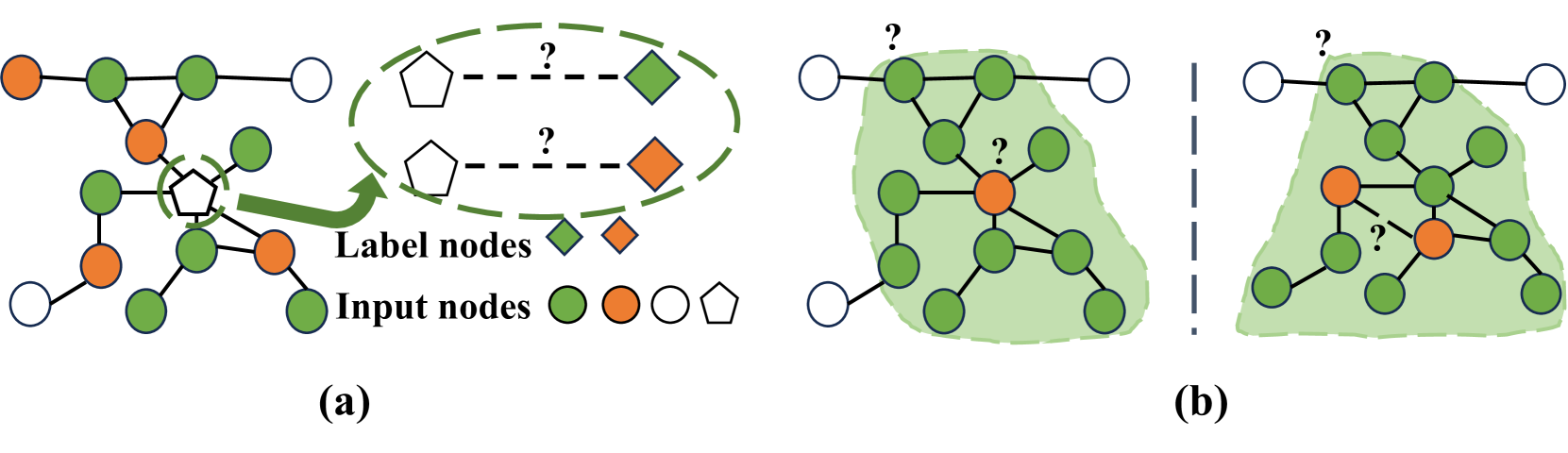

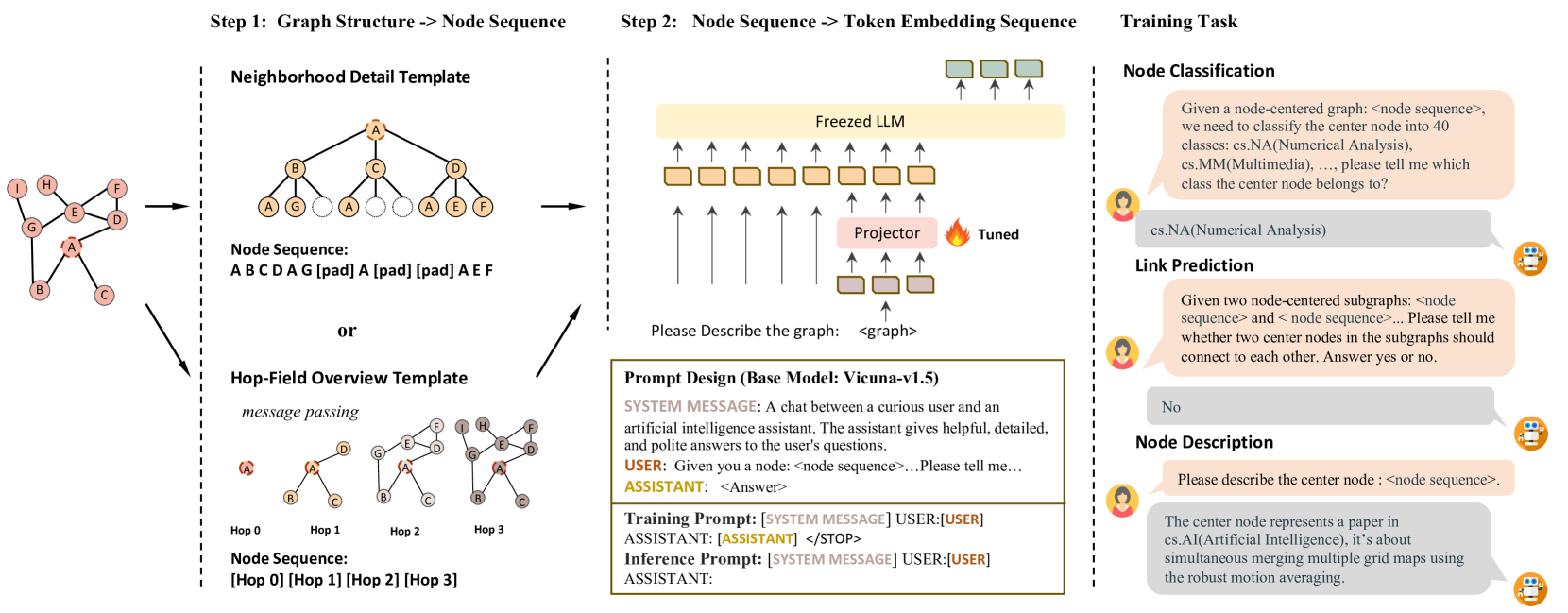

LLaGA: Large Language and Graph AssistantRunjin Chen, Tong Zhao, Ajay Kumar Jaiswal, Neil Shah, and Zhangyang WangIn International Conference on Machine Learning, 2024

LLaGA: Large Language and Graph AssistantRunjin Chen, Tong Zhao, Ajay Kumar Jaiswal, Neil Shah, and Zhangyang WangIn International Conference on Machine Learning, 2024Graph Neural Networks (GNNs) have empowered the advance in graph-structured data analysis. Recently, the rise of Large Language Models (LLMs) like GPT-4 has heralded a new era in deep learning. However, their application to graph data poses distinct challenges due to the inherent difficulty of translating graph structures to language. To this end, we introduce the Large Language and Graph Assistant (LLaGA), an innovative model that effectively integrates LLM capabilities to handle the complexities of graph-structured data. LLaGA retains the general-purpose nature of LLMs while adapting graph data into a format compatible with LLM input. LLaGA achieves this by reorganizing graph nodes to structure-aware sequences and then mapping these into the token embedding space through a versatile projector. LLaGA excels in versatility, generalizability and interpretability, allowing it to perform consistently well across different datasets and tasks, extend its ability to unseen datasets or tasks, and provide explanations for graphs. Our extensive experiments across popular graph benchmarks show that LLaGA delivers outstanding performance across four datasets and three tasks using one single model, surpassing state-of-the-art graph models in both supervised and zero-shot scenarios. Our code is available at \url{https://github.com/VITA-Group/LLaGA}.

- ACL

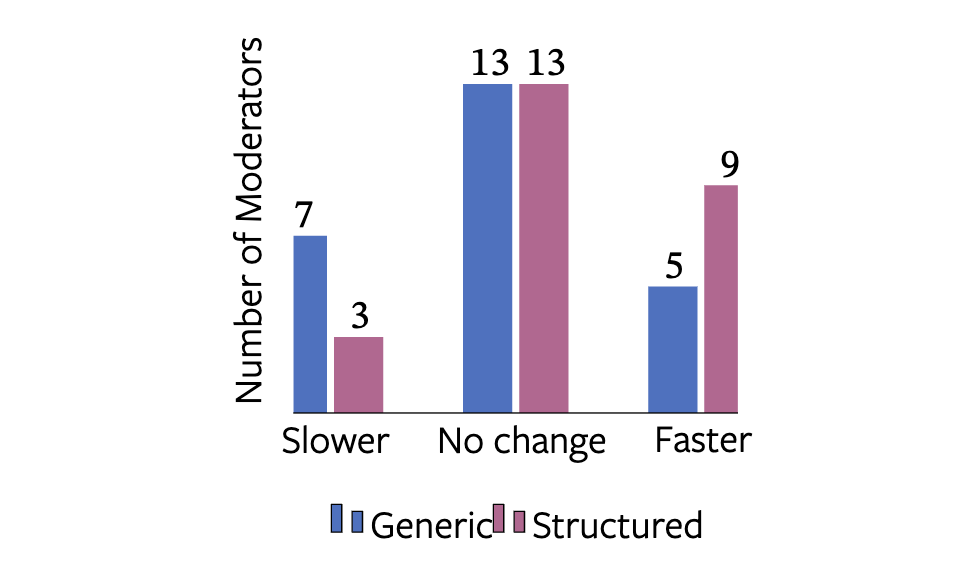

Explainability and Hate Speech: Structured Explanations Make Social Media Moderators FasterAgostina Calabrese, Leonardo Neves, Neil Shah, Maarten Bos, Björn Ross, Mirella Lapata, and Francesco BarbieriIn Annual Meeting of the Association for Computational Linguistics, 2024

Explainability and Hate Speech: Structured Explanations Make Social Media Moderators FasterAgostina Calabrese, Leonardo Neves, Neil Shah, Maarten Bos, Björn Ross, Mirella Lapata, and Francesco BarbieriIn Annual Meeting of the Association for Computational Linguistics, 2024Content moderators play a key role in keeping the conversation on social media healthy. While the high volume of content they need to judge represents a bottleneck to the moderation pipeline, no studies have explored how models could support them to make faster decisions. There is, by now, a vast body of research into detecting hate speech, sometimes explicitly motivated by a desire to help improve content moderation, but published research using real content moderators is scarce. In this work we investigate the effect of explanations on the speed of real-world moderators. Our experiments show that while generic explanations do not affect their speed and are often ignored, structured explanations lower moderators’ decision making time by 7.4%.

- ICLR

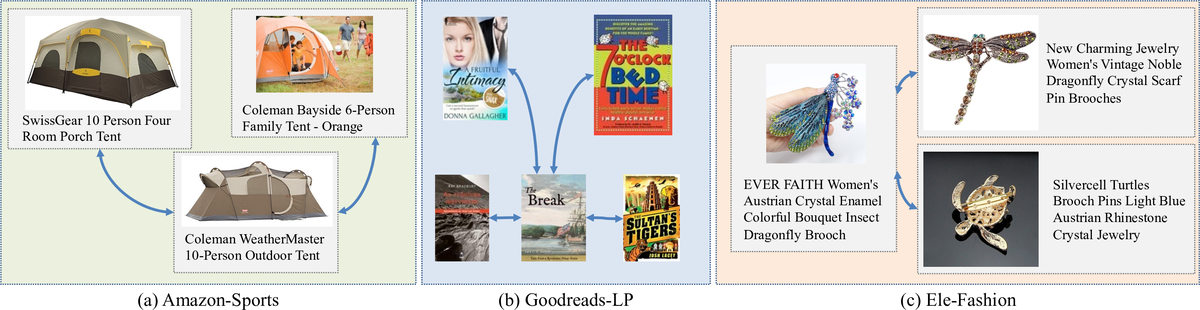

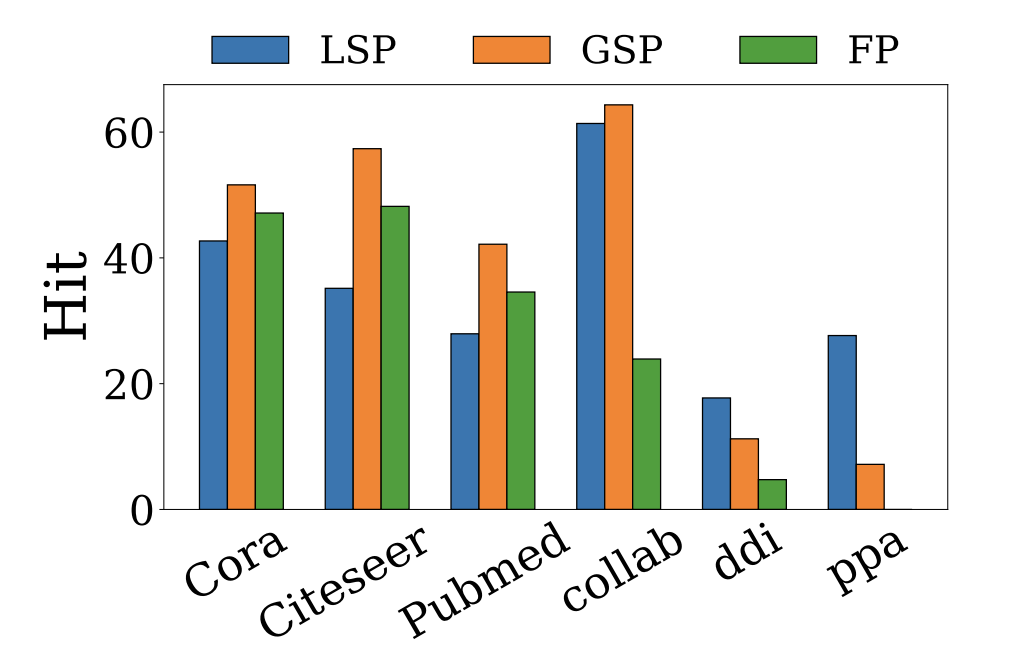

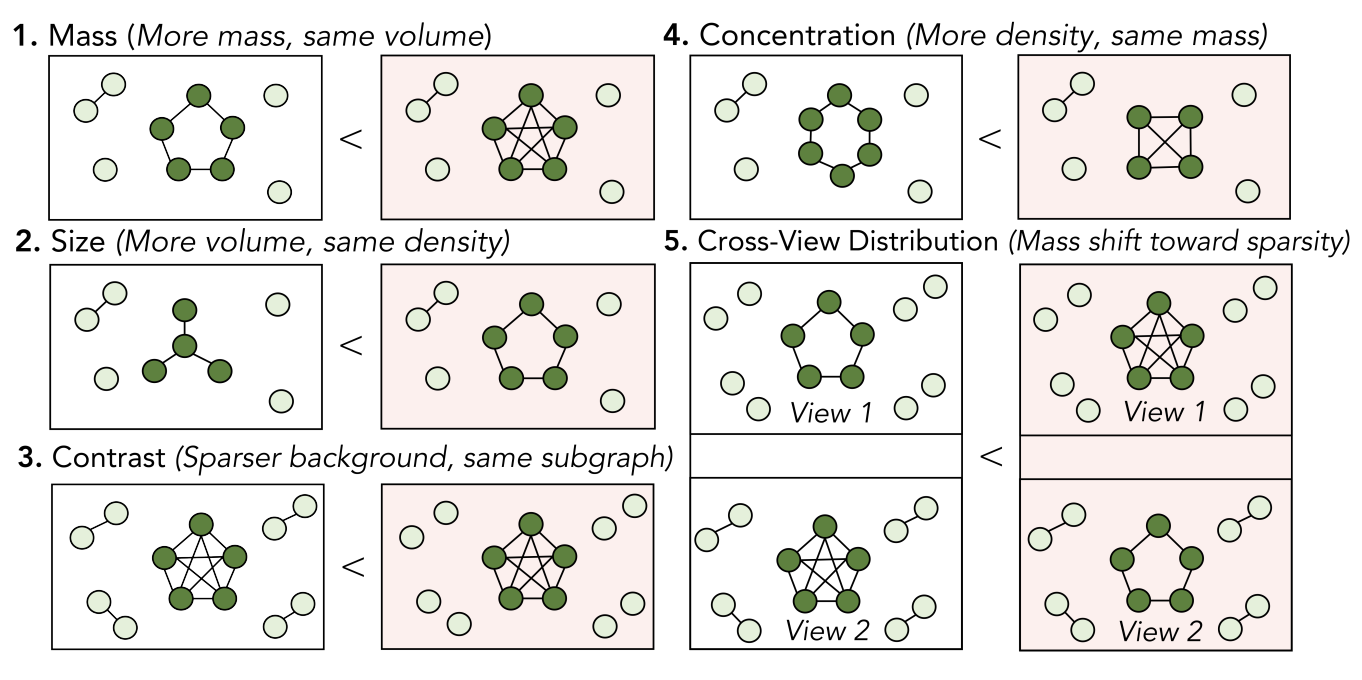

Revisiting Link Prediction: A Data PerspectiveHaitao Mao, Juanhui Li, Harry Shomer, Bingheng Li, Wenqi Fan, Yao Ma, Tong Zhao, Neil Shah, and 1 more authorIn International Conference on Learning Representations, 2024

Revisiting Link Prediction: A Data PerspectiveHaitao Mao, Juanhui Li, Harry Shomer, Bingheng Li, Wenqi Fan, Yao Ma, Tong Zhao, Neil Shah, and 1 more authorIn International Conference on Learning Representations, 2024Link prediction, a fundamental task on graphs, has proven indispensable in various applications, e.g., friend recommendation, protein analysis, and drug interaction prediction. However, since datasets span a multitude of domains, they could have distinct underlying mechanisms of link formation. Evidence in existing literature underscores the absence of a universally best algorithm suitable for all datasets. In this paper, we endeavor to explore principles of link prediction across diverse datasets from a data-centric perspective. We recognize three fundamental factors critical to link prediction: local structural proximity, global structural proximity, and feature proximity. We then unearth relationships among those factors where (i) global structural proximity only shows effectiveness when local structural proximity is deficient. (ii) The incompatibility can be found between feature and structural proximity. Such incompatibility leads to GNNs for Link Prediction (GNN4LP) consistently underperforming on edges where the feature proximity factor dominates. Inspired by these new insights from a data perspective, we offer practical instruction for GNN4LP model design and guidelines for selecting appropriate benchmark datasets for more comprehensive evaluations.

- ICLR

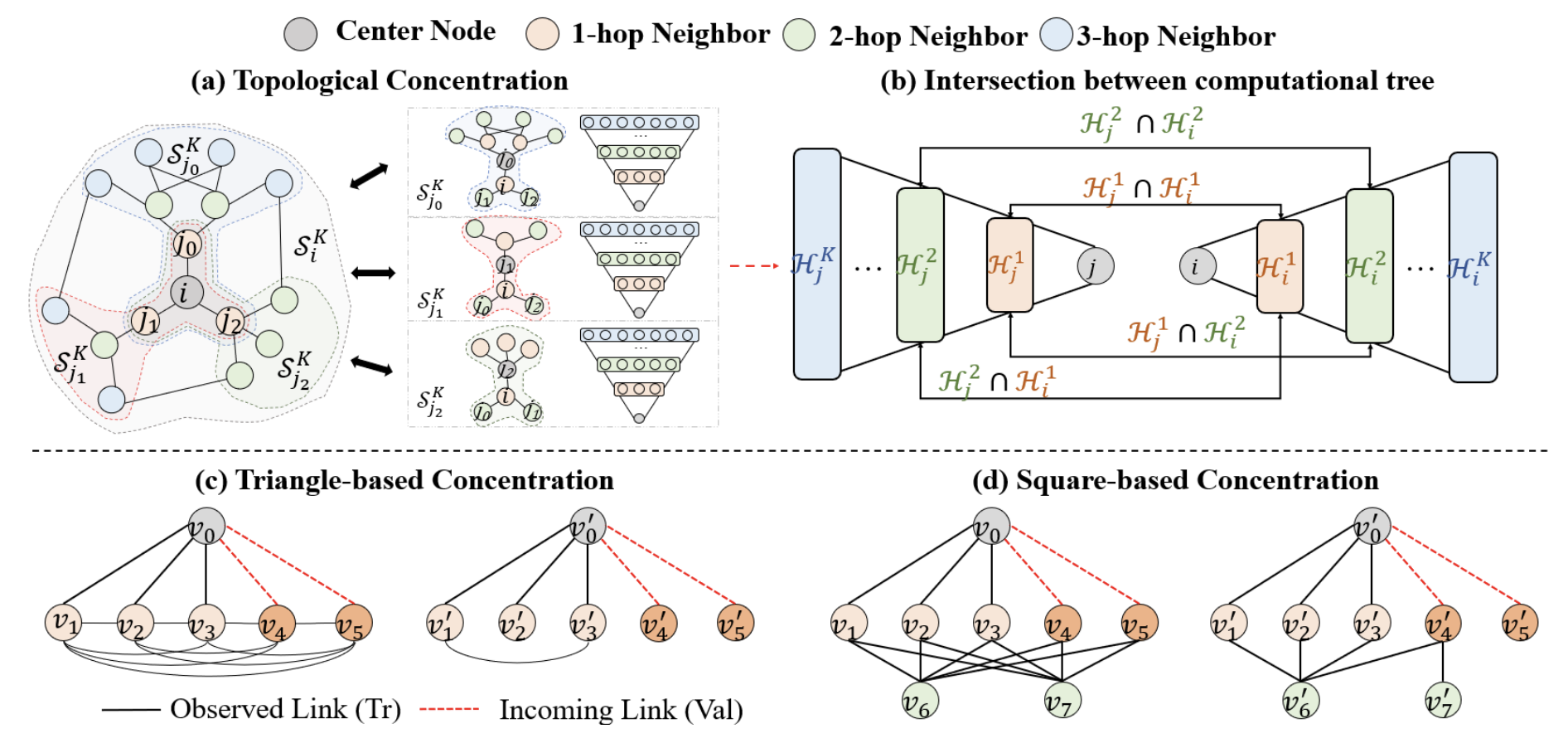

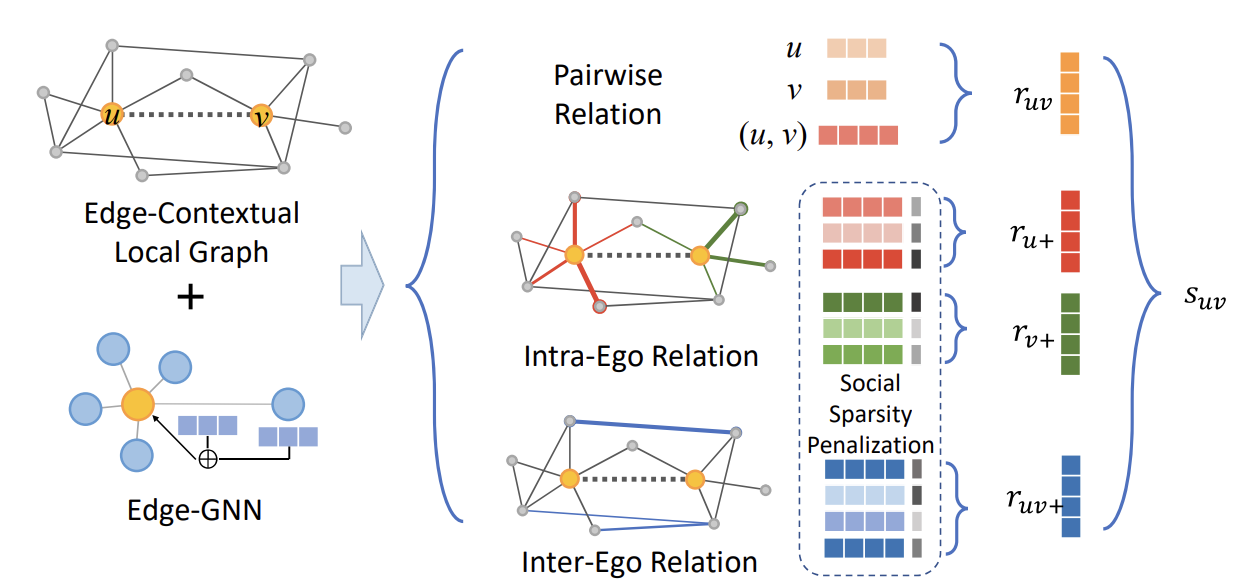

A Topological Perspective on Demystifying GNN-based Link Prediction PerformanceYu Wang, Tong Zhao, Yuying Zhao, Yunchao Liu, Xueqi Cheng, Neil Shah, and Tyler DerrIn International Conference on Learning Representations, 2024